12 September 2016: Introduction to Semantics

Handout: 105 Impcore Semantics, Part 1

Discussion: Two things you learned last class.

Topic of study: the stuff software is made of

Conclusion: make it easier to write programs that really work

Your language can make you or break you. - Compiler assignments at CMU

Cultural enrichment: Paul Graham, especially the "Blub paradox"

What if the course were called "Cooking"?

You'd need to know something about cooking works (THEORY)

Want to make bread? How does yeast work?

Want to avoid getting sick? Under what conditions do bacteria thrive?

Want to develop flavor? What triggers the Maillard reaction?

You'd want to know something about what makes food palatable (PRACTICE)

French cuisine: mirepoix (onions, carrots, celery cooked in butter)

Base sauces (the 5 "mother sauces" of Western cooking); Know what they are and when to use them.

The same thing for programming languages:

How programming langauges work: MATH (THEORY)

What makes programming languages usable them usable: Great features for writing CODE (PRACTICE)

Understanding core ideas of Programming Languages that manifest in many languages

The marriage of math and code

Principal tools: Induction and recursion

New ways of thinking about programming

Double your productivity

Become a sophisticated consumer, aware of old wine in new bottles

Learn new languages quicklly

Bonus: preparation for advanced study

(Course serves everyone from recent 15/61 grads to grad students)

If you're going to enjoy the course,

Intellectual tools you need to understand, use, and evaluate languages effectively

Notations of the trade (source of precision, further study)

Learn by doing:

Just as intellectually challenging as COMP 40, but in an entirely different direction.

Language shapes your thinking

There aren't that many great features, and you will see them over and over

You'll choose features, and therefore languages, to fit your needs

Some are more powerful than others

In Comp 105,

Only the most powerful features need apply

We explode your brain so you can think differently

You'll know you're doing it right if at first your head hurts terribly, then you have a breakthrough moment and it all seems pleasant afterwards

To say more with less (expressive power):

To promote reliability and reuse:

To describe it all precisely:

You must get Norman's book (Both Volumes!!!)

You won't need the book on ML for about a month

Homework will be frequent and challenging:

Both individual and pair work:

Arc of the homework looks something like this:

| Assignment | Difficulty |

|---|---|

| impcore | one star |

| opsem | two stars |

| scheme | three stars |

| hofs | four stars |

And it's more or less four-star homeworks from there on out.

Lesson: Don't make decisions based on the first couple of homeworks!

We don't cover everything in lecture

Lecture is for just the hard parts

We'll talk very little about the code (just the interesting bits)

In a 100-level course, you are responsible for your own learning

Course evaluations from previous years: a few students want everything gone over in lecture. That's not how things work in real life, and that's not how things work here. We point you in the right direction and identify traps and pitfalls, and we find good problems for you to work on.

If you're expecting to see everything in lecture, you have a couple of choices: change your expectations, or take the course next year when you will have more experience and will be more prepared to manage your own learning.

Call me "Kathleen," "Professor Fisher", or "Profesor."

Goal: Eliminate superficial differences

Imperative programming with an IMPerative CORE:

Write a function that takes a natural number n and returns true (1) iff all the digits in n are 4's.

Impcore variable definition

Example

(val n 99)Compare

int n = 99;Also, expressions at top level (definition of it)

Impcore expressions

No statements means expression-oriented:

(if e1 e2 e3)

(while e1 e2)

(set x e)

(begin e1 ... en)

(f e1 ... en)Each one has a value and may have side effects!

Functions are primitive (+ - * / = < > print)

or defined with (define f ...).

The only type of data is "machine integer" (deliberate oversimplification)

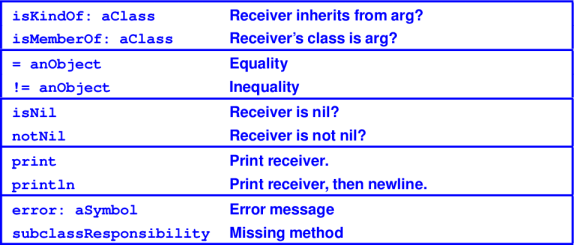

Scopes also called "name spaces"; we will call them "environments" because that's the pointy-headed theory term---and if you want to read some of the exciting papers, pointy-headed theory has to be second nature.

Names known in ``environments"

Ways to talk about meanings of names:

Impcore vars in 2 environments: globals, formals

There are no local variables

Functions live in their own environment (not shared with variables)

Environmental abuse

Abuse of separate name spaces:

-> (val f 33)

33

-> (define f (x) (+ x x))

f

-> (f f)

66Ways a recursive function could decompose a natural number n.

Peel back one (Peano numbers):

n = 0

n = m + 1, m is also a natural numberSplit into two pieces:

n = 0

n = k + (n - k) 0 < k < n (everything gets smaller)Sequence of decimal digits (see study problems on digits)

n = d, where 0 <= d < 10

n = 10 * m + d, where 0 <= d < 10 and m > 0To do your homework problems, which I recommend starting today, you'll need to invent at least one more.

Handout: 105 Impcore Semantics, Part 1

Discussion: Two things you learned last class.

Question: How do we define the meaning of a program?

while (i < n && a[i] < x) { i++ }

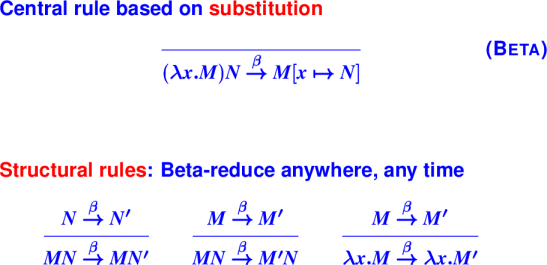

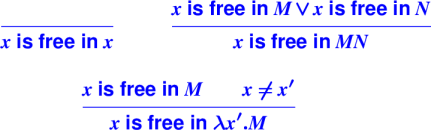

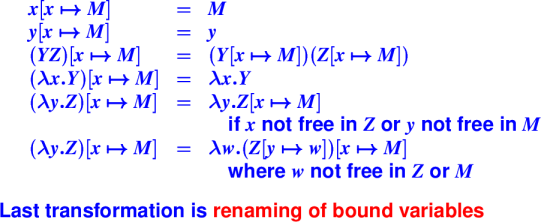

Answer: Inductively

Atomic forms: Assign meaing directly (e.g., constants, variables)

Compound forms: Assign meaning using the meaning of the parts

Programming-languages people are wild about compositionality.

Example of compositionality: PL Syntax (grammar)

(x + y) is a grammatical expression(x - y) is a grammatical expression(x + y) * (x - y) is an expressionExample of compositionality: Natural Language

fish is a noun phrasered is an adjectivered fish is a noun phraseBy design, programming languages more orderly than natural language.

Example of non-compositionality: Spelling/pronunciation in English

fish vs ghotiQuestion: What do we assign meaning to?

Answer: The Abstract Syntax Tree (AST) of the program.

An AST is a data structure that represents a program.

A parser converts program text into an AST.

Question: How can we represent all while loops?

while (i < n && a[i] < x) { i++ }

Answer:

As a data structure:

Norman Ramsey and Geoff Mainland put together some Beamer slides explaining operational semantics for Impcore.

Handout: 105 Impcore Semantics, Part 1

Handout: 105 Impcore Semantics, Part 2

then

e does not contain set then evaluating e does not change ξ.cons cellse does not contain set then evaluating e does not change ξ.setWe can just look at the syntax, or we can make a proof system:

and also

Notice that set is the only construct that changes the environment.

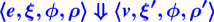

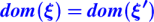

Formally: If e contains no set operation and

then ξ = ξʹ.

Proof by induction on the derivation of

Case Literal: Follows immediately

Case If Then Else: Follows from IH.

Case Apply(+): ???

Lesson: Make sure you consider each case carefully!

Structure of the input drives the structure of the code.

To discover recursive functions, write algebraic laws:

sum 0 = 0

sum n = n + sum (n - 1)Which direction gets smaller?

Code:

(define sum (n)

(if (= n 0) 0 (+ n (sum (- n 1)))))Another example:

exp x 0 = 1

exp x (n + 1) = x * (exp x n)Can you find a direction in which something gets smaller?

Code:

(define exp (x n)

(if (= n 0)

1

(* x (exp x (- n 1)))))Programming with assignments etc

Recursion and composition:

Recursive function boot camp

Two recursive data structures: the list and the S-expression

More powerful ways of putting functions together (compositionality again, and it leads to reuse)

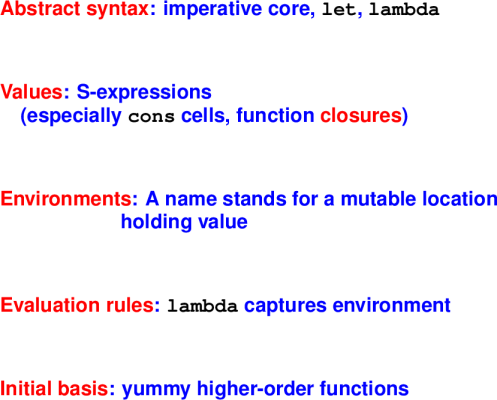

As a lens for understanding, you can ask these questions about any language:

What is the abstract syntax? What are the syntactic categories, and what are the terms in each category?

What are the values? What do expressions/terms evaluate to?

What environments are there? That is, what can names stand for?

How are terms evaluated? What are the judgments? What are the evaluation rules?

What's in the initial basis? Primitives and otherwise, what is built in?

Question 2: what are the values?

Two new kinds of data:

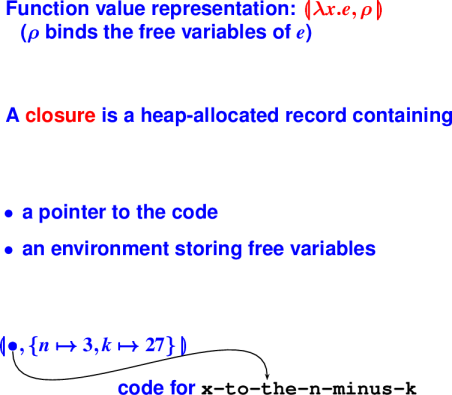

The function closure: the key to "first-class" functions

Pointer to automatically managed cons cell (mother of civilization)

Picture of a cons cell: (cons 3 (cons ( 2 '())))

Values are S-expressions.

An S-expression is either

a symbol 'Halligan 'tufts

a literal integer 0 77

a literal Boolean #t #f

or a list of S-expressions (to a first approximation)

A list of S-expressions is either

the empty list '()

an S-expression followed by a list of S-expressions

Like any other abstract data type, S-Expresions have:

creators that create new values of the type '()

producers that make new values from existing values (cons s s')

mutators that change values of the type (not in uScheme)

observers that examine values of the type

number? symbol? boolean? null? car cdr

N.B. creators + producers = constructors

(cons 'a '()) also written '(a)

(cons 'b '(a))

(cons 'c '(b a))

(null? '(c b a)) equals #f

(cdr '(c b a) equals '(b a)

(car '(c b a) equals 'c

The symbol ' is pronounced "tick." It indicates that what follows is a literal.

What is the representation of

'((a b) (c d))

which can be alternatively written

cons( (cons a (cons b '())) cons( (cons c (cons d '())) '())

What is the representation of

cons('a 'b)

Contrast this representation with the one for

cons('a '())

Both of these expressions are S-expressions, but only cons('a '()) is a list.

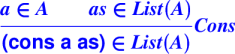

cons cells.Can be defined via inference rules:

Constructors: '(),cons

Observers: null?, pair?, car, cdr (also known as "first" and "rest", "head" and "tail", and many other names)

Sequences a frequently used abstraction

Can easily approximate a set

Can implement finite maps with association lists (aka dictionaries)

You don't have to manage memory

These "cheap and cheerful" representations are less efficient than balanced search trees, but are very easy to implement and work with---see the book.

The only thing new here is automatic memory management. Everything else you could do in C. (You can have automatic memory management in C as well.)

Algebraic laws of lists:

(null? '()) == #t

(null? (cons v vs)) == #f

(car (cons v vs)) == v

(cdr (cons v vs)) == vsCombine creators/producers with observers

Can use laws to prove properties of code and to write better code.

Any list is therefore constructed with '() or with cons applied to an atom and a smaller list.

Example: length

Algebraic Laws for length

length '() = 0

length (cons x xs) = 1 + length xsCode:

(define length (x)

(if (null? x)

0

(+ 1 (length (cdr x)))))Using informal math notation with .. for "followed by" and e for the empty sequence, we have these laws:

xs .. e = xs

e .. ys = ys

(z .. zs) .. ys = z .. (zs .. ys)

xs .. (y .. ys) = (xs .. y) .. ysThe underlying operations are append, cons, and snoc. Which ..'s are which?

But we have no snoc

If we cross out the snoc law, we are left with three cases... but case analysis on the first argument is complete.

So cross out the law xs .. e == xs.

Which rules look useful for writing append?

*** Equations and function for append ***(append '() ys) == ys

(append (cons z zs) ys) == (cons z (append zs ys))

(define append (xs ys)

(if (null? xs) ys

(cons (car xs) (append (cdr xs) ys))))Why does it terminate?

The major cost center is cons because it corresponds to allocation.

How many cons cells are allocated?

Let's rigorously explore the cost of append.

IF

IH ('())

If a in A and IH(as) then IH (cons a as)

THEN

Forall as in List(A), IH(as)

Claim: Cost (append xs ys) = |xs|

Proof: By induction on the structure of xs.

Base case: xs = '()

(append '() ys) returns ys with 0 allocated cons cells. Induction case: xs = (cons z zs)

car xs = z and cdr xs = zs and |xs| = 1 + |zs|

cost (append (cons z zs) ys)) =

cost (cons z (append zs ys)) =

1 + cost (append zs ys) =

By IH, cost (append zs ys) = |zs|

1 + |zs| =

|xs|Conclusion: Cost of append is linear in length of first argument.

'(), cons)car, cdr, null?)appendAlgebraic laws for list reversal:

reverse '() = '()

reverse (x .. xs) = reverse xs .. reverse '(x) = reverse xs .. '(x)And the code?

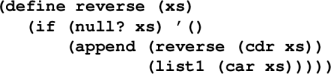

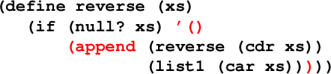

*** Naive list reversal ***

(define reverse (xs)

(if (null? xs) '()

(append (reverse (cdr xs))

(list1 (car xs)))))The list1 function maps an atom x to the singleton list containing x.

How many cons cells are allocated? Let's let n = |xs|.

reverse? A: nappend? A: nreverse? A: n-1, n-2, ... , 0append? A: n-1, n-2, ... , 0cons cells are allocated by call to list1? A: one per call to reverse.Let's try a new algebraic law:

reverse (x .. xs) zs = reverse xs .. x .. zs = reverse xs .. (cons x zs)

reverse '() zs = zsThe code

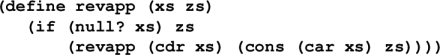

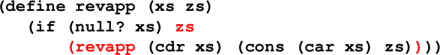

*** Reversal by accumulating parameters ***

(define revapp (xs zs)

(if (null? xs) zs

(revapp (cdr xs)

(cons (car xs) zs))))

(define reverse (xs) (revapp xs '()))The cost of this version is linear in the length of the list being reversed.

Parameter zs is the accumulating parameter.

(A powerful, general technique.)

Implementation: list of key-value pairs

'((k1 v1) (k2 v2) ... (kn vn))Picture with spine of cons cells, car, cdar, caar, cadar.

*** A-list example ***

-> (find 'Building

'((Course 105) (Building Braker)

(Instructor Fisher)))

Braker

-> (val ksf (bind 'Office 'Halligan-205

(bind 'Courses '(105)

(bind 'Email 'comp105-staff '()))))

((Email comp105-staff)

(Courses (105))

(Office Halligan-205))

-> (find 'Office ksf)

Halligan-205

-> (find 'Favorite-food ksf)

()Notes:

*** Laws of assocation lists ***

(find k (bind k v l)) = v

(find k (bind k' v l)) = (find k l), provided k != k'

(find k '()) = '() --- bogus!let binding *** Introduce local names into environment ***

(let ((x1 e1)

...

(xn en))

e)Evaluate e1 through en, bind answers to x1, ... xn

Creates new environment for local use only:

rho {x1 |-> v1, ..., xn |-> vn}Also let* (one at a time) and letrec (local recursive functions)

Note that we really have definititions and it might be easier to read if McCarthy had actually used definition syntax, which you'll see in ML, Haskell, and other functional languages:

*** What McCarthy should have done ***

(let ((val x1 e1)

...

(val xn en))

e)Things that should offend you about Impcore:

Look up function vs look up variable requires different interfaces!

To get a variable, must check 2 or 3 environments.

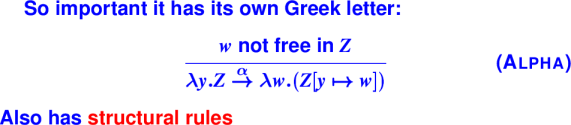

All these problems have one solution: lambda

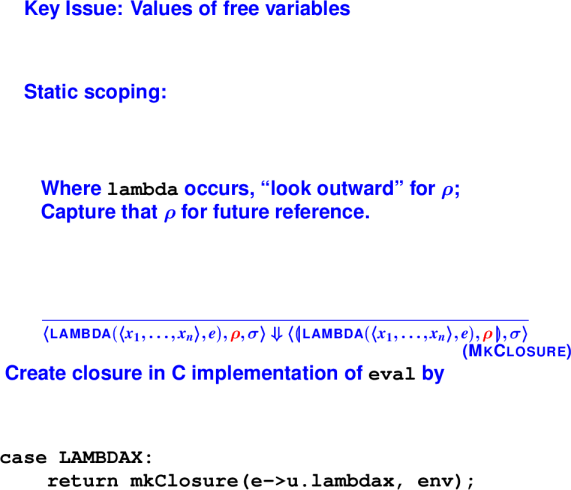

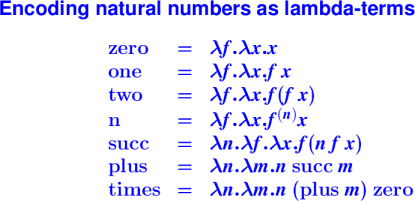

From Church's lambda-calculus:

(lambda (x) (+ x x))"The function that maps x to x plus x"

At top level, like define. (Or more accurately, define is a synonym for lambda that also gives the lambda a name.)

In general, \x.E or (lambda (x) E)

x is bound in EEThe ability to "capture" free variables is what makes it interesting.

Functions become just like any other value.

(lambda (x) (+ x y)) ; means what??What matters is that y can be a parameter or a let-bound variable of an enclosing function.

First example: Finding roots. Given n and k, find an x such that x^n = k.

Step 1: Write a function that computes x^n - k.

Step 2: Write a function that finds a zero between lo and hi bounds.

Picture of zero-finding function. Algorithm uses binary search over integer interval between lo and hi. Finds point in that interval in which function is closest to zero.

Code that computes the function x^n - k given n and k:

*** Function escapes! ***

-> (define to-the-n-minus-k (n k)

(let

((x-to-the-n-minus-k (lambda (x)

(- (exp x n) k))))

x-to-the-n-minus-k))

-> (val x-cubed-minus-27 (to-the-n-minus-k 3 27))

-> (x-cubed-minus-27 2)

-19The function to-the-n-minus-k is a higher-order function because it returns another (escaping) function as a result.

*** No need to name the escaping function ***

-> (define to-the-n-minus-k (n k)

(lambda (x) (- (exp x n) k)))

-> (val x-cubed-minus-27 (to-the-n-minus-k 3 27))

-> (x-cubed-minus-27 2)

-19General purpose zero-finder that works for any function f:

*** The zero-finder ***

(define findzero-between (f lo hi)

; binary search

(if (>= (+ lo 1) hi)

hi

(let ((mid (/ (+ lo hi) 2)))

(if (< (f mid) 0)

(findzero-between f mid hi)

(findzero-between f lo mid)))))

(define findzero (f) (findzero-between f 0 100))findzero-between is also a higher-order function because it takes another function as an argument. But nothing escapes; you can do this in C.

Example uses:

*** Cube root of 27 and square root of 16 ***

-> (findzero (to-the-n-minus-k 3 27))

3

-> (findzero (to-the-n-minus-k 2 16))

4 *** Lambda questions ***

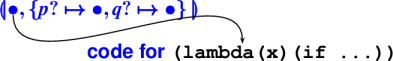

(define combine (p? q?)

(lambda (x) (if (p? x) (q? x) #f)))

(define divvy (p? q?)

(lambda (x) (if (p? x) #t (q? x))))

(val c-p-e (combine prime? even?))

(val d-p-o (divvy prime? odd?))

(c-p-e 9) == ? (d-p-o 9) == ?

(c-p-e 8) == ? (d-p-o 8) == ?

(c-p-e 7) == ? (d-p-o 7) == ?

*** Lambda answers ***

(define combine (p? q?)

(lambda (x) (if (p? x) (q? x) #f)))

(define divvy (p? q?)

(lambda (x) (if (p? x) #t (q? x))))

(val c-p-e (combine prime? even?))

(val d-p-o (divvy prime? odd?))

(c-p-e 9) == #f (d-p-o 9) == #t

(c-p-e 8) == #f (d-p-o 8) == #f

(c-p-e 7) == #f (d-p-o 7) == #t"Escape" means "outlive the function in which the lambda was evaluated."

Typically returned

More rarely, stored in a global variable or a heap-allocated data structure

We have already seen an example:

*** An ``escaping'' function ***

-> (define to-the-n-minus-k (n k)

(lambda (x) (- (exp x n) k)))Where are n and k stored???

Values that escape have to be allocate on the heap

C programmers use malloc to explicitly manage such values.

In a language with first-class, nested functions, storage of escaping values is part of the semantics of lambda.

Picture of activation record for to-the-n-minus-k with n and k being popped.

Closures represent escaping functions:

An example:

*** What's the closure for conjunction? ***

(define combine (p? q?)

(lambda (x) (if (p? x) (q? x) #f)))Closure for conjunction:

Preview: in math, what is the following equal to?

(f o g)(x) == ???Another algebraic law, another function:

(f o g) (x) = f(g(x))

(f o g) = \x. (f (g (x)))

*** Functions create new functions ***

-> (define o (f g) (lambda (x) (f (g x))))

-> (define even? (n) (= 0 (mod n 2)))

-> (val odd? (o not even?))

-> (odd? 3)

-> (odd? 4)Another example: (o not null?)

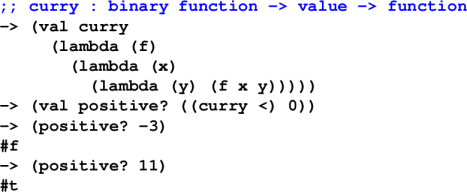

Currying converts a binary function f(x,y) to a function f' that takes x and returns a function f'' that takes y and returns the value f(x,y).

As we study higher-order functions in more detail, you will see why currying is useful.

*** Classic functional technique: Currying ***

-> (val positive? (lambda (y) (< 0 y)))

-> (positive? 3)

-> (val <-c (lambda (x) (lambda (y) (< x y))))

-> (val positive? (<-c 0)) ; "partial application"

-> (positive? 0)Curried functions take their arguments "one-at-a-time."

*** What's the algebraic law for `curry`? ***

... (curry f) ... = ... f ...

Keep in mind:

All you can do with a function is apply it!

(((curry f) x) y) = f (x, y)No need to Curry by hand!:

*** Exercises ***

-> (map ((curry +) 3) '(1 2 3 4 5))

???

-> (exists? ((curry =) 3) '(1 2 3 4 5))

???

-> (filter ((curry >) 3) '(1 2 3 4 5))

??? ; tricky

*** Answers ***

-> (map ((curry +) 3) '(1 2 3 4 5))

(4 5 6 7 8)

-> (exists? ((curry =) 3) '(1 2 3 4 5))

-> (filter ((curry >) 3) '(1 2 3 4 5))

(1 2) *** Bonus content: vulnerable variables? ***

-> (val seed 1)

-> (val rand (lambda ()

(set seed (mod (+ (* seed 9) 5) 1024)))))

-> (rand)

14

-> (rand)

131

-> (set seed 1)

1

-> (rand)

14Q: What's the problem with this approach?

A: The seed is exposed to the end user, who can break the abstraction of the rand function.

*** Bonus: Lambda as abstraction barrier! ***

-> (val mk-rand (lambda (seed)

(lambda ()

(set seed (mod (+ (* seed 9) 5) 1024))))))

-> (val rand (mk-rand 1))

-> (rand)

14

-> (rand)

131

-> (set seed 1)

error: set unbound variable seed

-> (rand)

160Q: Can you do case analysis on a function?

A: No!

Q: So what can you do then?

A: Apply it!

Recursive function consuming A is related to proof about A

Q: How to prove two lists are equal?

A: Prove they are both '() or that they are both cons cells cons-ing equal car's to equal cdr's

Q: How to prove two functions equal?

A: Prove that when applied to equal arguments they produce equal results.

Goal: Start with functions on elements, end up with functions on lists

Goal: Capture common patterns of computation or algorithms

exists? (Ex: Is there a number?)all? (Ex: Is everything a number?)filter (Ex: Take only the numbers)map (Ex: Add 1 to every element)Fold also called reduce, accum, a "catamorphism"

exists?Algorithm encapsulated: linear search

Example: Is there a even element in the list?

Algebraic laws:

(exists? p? '()) == ???

(exixts? p? '(cons a as)) == ???

(exists? p? '()) == #f

(exixts? p? '(cons a as)) == p? x or exists? p? xs

*** Defining exists? ***

-> (define exists? (p? xs)

(if (null? xs)

(if (p? (car xs))

(exists? p? (cdr xs)))))

-> (exists? pair? '(1 2 3))

-> (exists? pair? '(1 2 (3)))

-> (exists? ((curry =) 0) '(1 2 3))

-> (exists? ((curry =) 0) '(0 1 2 3))Filter:

filterAlgorithm encapsulated: Linear filtering

Example: Given a list of numbers, return only the even ones.

Algebraic laws:

(filter p? '()) == ???

(filter p? '(cons m ms)) == ???

(filter p? '()) == '()

(filter p? '(cons m ms)) == if (p? m)

(cons m (filter p? ms))

(filter p? ms)

*** Defining filter ***

-> (define filter (p? xs)

(if (null? xs)

'()

(if (p? (car xs))

(cons (car xs) (filter p? (cdr xs)))

(filter p? (cdr xs)))))

-> (filter (lambda (n) (> n 0)) '(1 2 -3 -4 5 6))

(1 2 5 6)

-> (filter (lambda (n) (<= n 0)) '(1 2 -3 -4 5 6))

(-3 -4)

-> (filter ((curry <) 0) '(1 2 -3 -4 5 6))

(1 2 5 6)

-> (filter ((curry >=) 0) '(1 2 -3 -4 5 6))

(-3 -4)

*** Composition Revisited: List Filtering ***

-> (val positive? ((curry <) 0))

<procedure>

-> (filter positive? '(1 2 -3 -4 5 6))

(1 2 5 6)

-> (filter (o not positive?) '(1 2 -3 -4 5 6))

(-3 -4)Map:

mapAlgorithm encapsulated: Transform every element

Example: Square every element of a list.

Algebraic laws:

(map f '()) == ???

(map f (cons n ns)) == ???

(map f '()) == '()

(map f (cons n ns)) == cons (f n) (map f ns)

*** Defining map ***

-> (define map (f xs)

(if (null? xs)

'()

(cons (f (car xs)) (map f (cdr xs)))))

-> (map number? '(3 a b (5 6)))

(#t #f #f #f)

-> (map ((curry *) 100) '(5 6 7))

(500 600 700)

-> (val square* ((curry map) (lambda (n) (* n n))))

<procedure>

-> (square* '(1 2 3 4 5))

(1 4 9 16 25)Foldr:

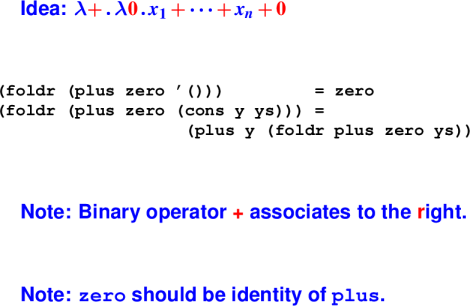

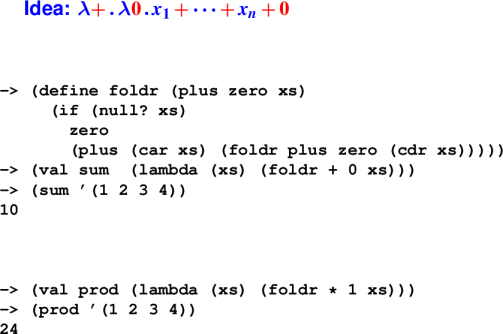

foldAlgebraic laws for foldr:

foldr takes two arguments:

zero: What to do with the empty list.

plus: How to combine next element with running results.

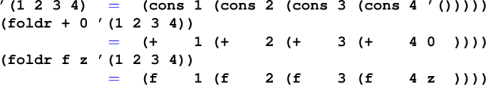

Example: foldr plus zero '(a b)

cons a (cons b '())

| | |

v v v

plus a (plus b zero)Code for foldr:

Another view of operator folding:

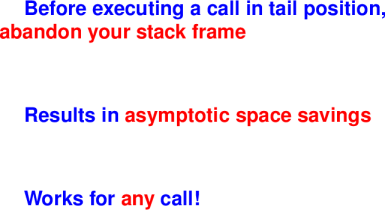

Intuition: In a function, a call is in tail position if it is the last thing the function will do.

A tail call is a call in tail position.

Important for optimizations: Can change complexity class.

What is tail position?

Tail position is defined inductively:

(if e1 e2 e3) is in tail position, so are e2 and e3(let (...) e) is in tail position, so is e, and similary for letrec and let*.(begin e1 ... en) is in tail position, so is en.Idea: The last thing that happens

Anything in tail position is the last thing executed!

Key idea is tail-call optimization!

Tail-call optimization:

Example of tail position:

Example of tail position:

Example: reverse '(1 2)

Question: How much stack space is used by the call?

Call stack:

reverse '()

append

reverse '(2)

append

reverse '(1 2)Answer: Linear in the length of the list

Another example of tail position:

Another example of tail position:

Example: revapp '(1 2) '()

Question: How much stack space is used by the call?

Call stack: (each line replaces previous one)

revapp '(1 2) '() --> revapp '(2) '(1) --> revapp '() '(2 1)

Answer: Constant

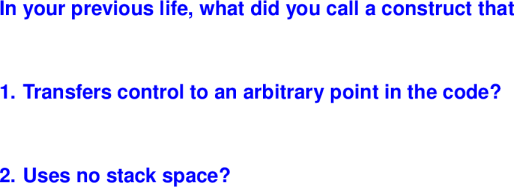

Question:

Answer: a goto!!

Think of "tail call" as "goto with arguments"

exists? all?filtermapfoldHOFs homework due Wednesday, Oct 12

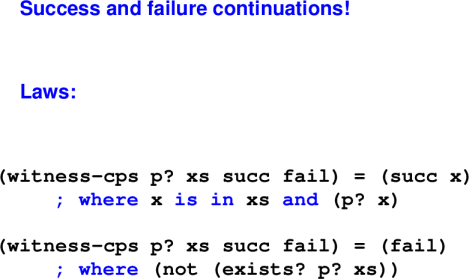

Continuations

A continuation is code that represents "the rest of the computation."

Direct style: Last action of a function is to return a value. (This style is what you are used to.)

Continuation-passing style (CPS): Last action of a function is to "throw" value to a continuation.

Compiler representation: Compilers for functional languages often convert direct-style user code to CPS because CPS matches control-flow of assembly.

Some languages provide a construct for capturing the current continuation and giving it a name k. Control can be resumed at captured continuation by throwing to k.

A style of coding that can mimic exceptions

Call-backs in GUI frameworks

First-class continuations require compiler support.

We're going to simulate continuations with function calls in tail position.

How functions finish:

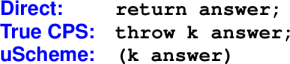

Design Problem: Missing Value:

Ideas?

Bad choices:

'failGood choice:

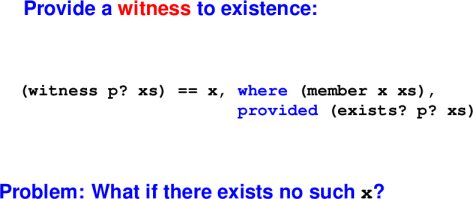

Solution: A New Interface:

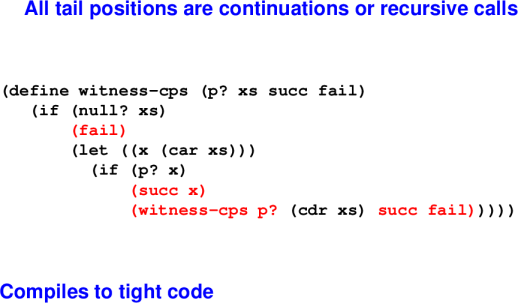

*** Coding \lit{witness} with continuations ***

(define witness-cps (p? xs succ fail)

(if (null? xs)

(fail)

(let ((x (car xs)))

(if (p? x)

(succ x)

(witness-cps p? (cdr xs) succ fail)))))``Continuation-Passing Style'':

Question: How much stack space is used by the call?

Answer: Constant

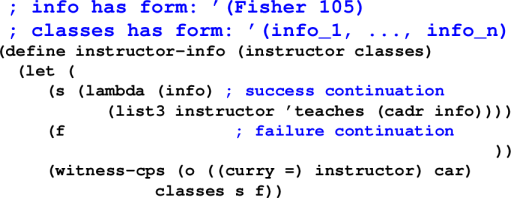

*** Example Use: Instructor Lookup ***

-> (val 2016f '((Fisher 105)(Hescott 170)(Chow 116)))

-> (instructor-info 'Fisher 2016f)

(Fisher teaches 105)

-> (instructor-info 'Chow 2016f)

(Chow teaches 116)

-> (instructor-info 'Souvaine 2016f)

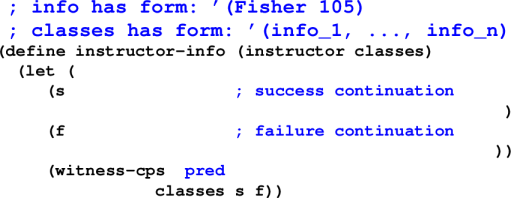

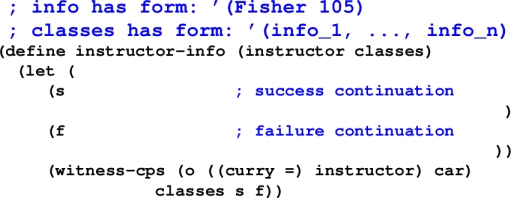

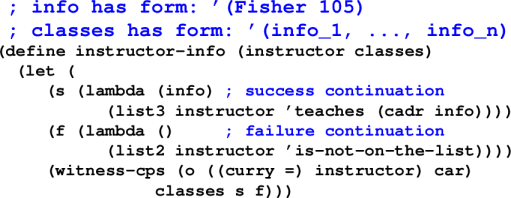

(Souvaine is-not-on-the-list)Instructor Lookup: The Code:

Instructor Lookup: The Code:

Instructor Lookup: The Code:

Instructor Lookup: The Code:

*** Exercise: Find a satisfying assignment if one exists ***

(val f1 '(and x y z w p q (not x)))

(val f2 '(not (or x y)))

(val f3 '(not (and x y z)))

(val f4 '(and (or x y z)

(or (not x) (not y) (not z))))Wait for it ...:

*** Satisfying assignments ***

(val f1 '(and x y z w p q (not x))) ; NONE

(val f2 '(not (or x y)))

; { x |-> #f, y |-> #f }

(val f3 '(not (and x y z)))

; { x |-> #f, ... }

(val f4 '(and (or x y z)

(or (not x) (not y) (not z))))

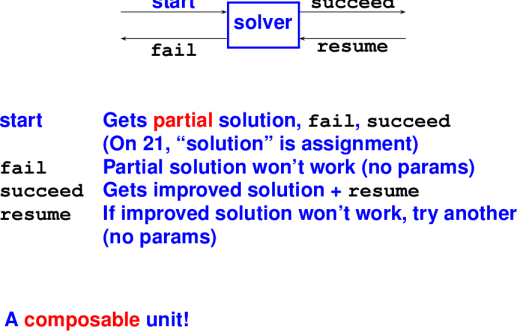

; { x |-> #f, y |-> #t, ... }Continuations for Search:

start carries a partial truth assignment to variables current

Box describes how to extend current to make a variable, say x, true.

Case 1: current(x) = #t

Call success continuation with current

Pass fail as resume continuation (argument to success)

Case 2: current(x) = #f

Call fail continuation

Case 3: x not in current

Call success cotinuation with current{x -> #t}

Pass fail as resume continuation

start carries a partial truth assignment to variables current

Box describes how to extend current to make a negated variable, say not x, true.

Case 1: current(x) = #f

Call success continuation with current

Pass fail as resume continuation (argument to success)

Case 2: current(x) = #t

Call fail continuation

Case 3: x not in current

Call success cotinuation with current{x -> #f}

Pass fail as resume continuation

Solver enters A

If A is solved, newly allocated success continuation starts B

If B succeeds, we're done! Use success continuation from context.

If B fails, use resume continuation A passed to B as fail.

If A fails, the whole thing fails. Use fail continuation from context.

Solver enters A

If A is solved, we're good! But what if context doesn't like solution? It can resume A using the resume continuation passed out as fail.

If A can't be solved, don't give up! Try a newly allocated failure continuation to start B.

If ever B is started, we've given up on A entirely. So B's success and failure continuations are exactly the ones in the context.

If B succeeds, but the context doesn't like the answer, the context can resume B.

If B fails, abject failure all around; call the original fail continuation.

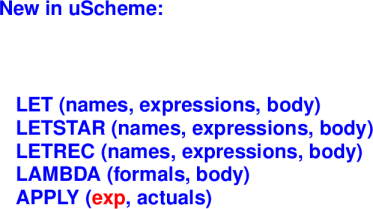

First four of five questions: Syntax, Values, Environments, Evaluation

Key changes from Impcore:

New constructs: let, lambda, application (not just names)

New values: cons cells and functions (closures)

A single environment

Environments get copied (in closures).

Environment maps names to mutable locations, not values.

A store maps locations to values.

{New Abstract Syntax}:

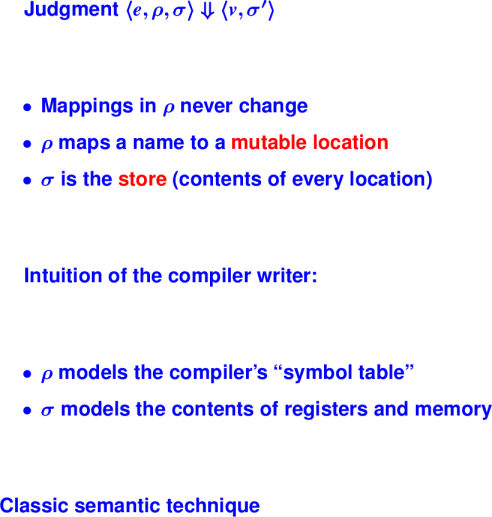

{New Evaluation Judgment}:

It's not precisely true that rho never changes.

New variables are added when they come into scope.

Old variables are deleted when they go out of scope.

But the location associated with a variable never changes.

The book includes all rules for uScheme. Here we will discuss on key rules.

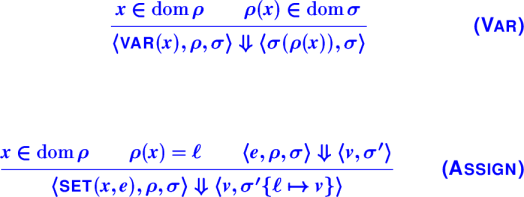

{New Evaluation Rules}:

Board: Picture of environment pointing to store.

Questions about Assign:

What changes are captured in σʹ?

What changes are captured in σʹ{ℓ ↦ v}?

What would happen if we used σ instead of σʹ

What would happen if we used a fresh ℓ?

Some other ℓ in the range of ρ?

{Semantics of Lambda}:

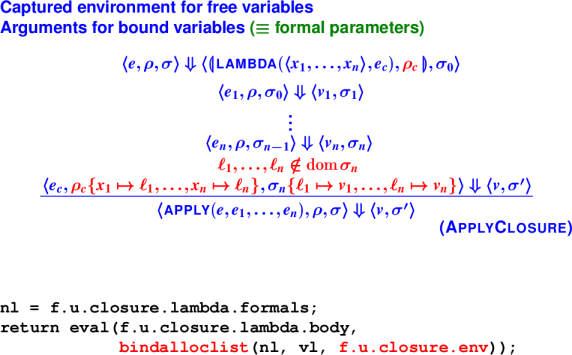

{Applying Closures}:

Questions about ApplyClosure:

What if we used σ instead of σ0 in evaluation of e1?

What if we used σ instead of σ0 in evaluation of arguments?

What if we used ρc instead of ρ in evaluation of arguments?

What if we did not require ℓ1, …, ℓn ∉ dom(σ)?

What is the relationship between ρ and σ?

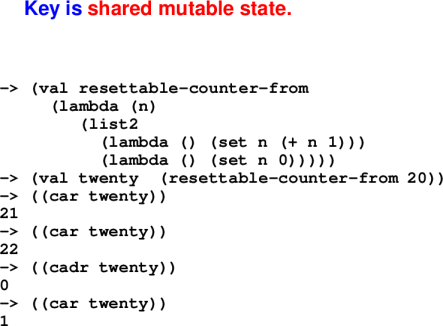

{Locations in Closures}:

Picture of environment and store that results from executing above program.

Closure Optimizations

Which let is which and why?

Recall:

let puts the new bindings in scope only for the body expression.let* adds each binding one at a time, so each binding is in scope for the later ones.letrec considers all the bindings to be mutually recursive.{uscheme and the Five Questions}:

Advantages:

Down sides:

Bottom line: it's all about lambda

Real Scheme: Macros

A Scheme program is just another S-expression

Function define-syntax manipulates syntax at compile time

Macros are hygienic---name clashes impossible

let, and, many others implemented as macros

*** Real Scheme: Conditionals ***

(cond (c1 e1) ; if c1 then e1

(c2 e2) ; else if c2 then e2

... ...

(cn en)) ; else if cn then en

; Syntactic sugar---'if' is a macro:

(if e1 e2 e3) == (cond (e1 e2)

(#t e3))Real Scheme: Mutation

Not only variables can be mutated.

Mutate heap-allocated cons cell:

(set-car! '(a b c) 'd) => (d b c)Circular lists, sharing, avoids allocation

Apply your new knowledge in Standard ML:

Lectures on ML:

Meta: Not your typical introduction to a new language

Designed for programs, logic, symbolic data

Theme: Precise ways to describe data

ML = uScheme + pattern matching + exceptions + static types

uScheme

(cons x xs)

'()

(lambda (x) e)

or and

#t #f

(let (x e1) e2)

ML

x :: xs

[] or nil

fn x => e

orelse andalso

true false

let val x = e1 in e2 end

Pattern matching facilitates case analysis.

Static types tell us at compile time what the cases are.

And lots of new concrete syntax!

The length function.

Algebraic laws:

length [] = 0

length (x::xs) = 1 + length xsThe code:

fun length [] = 0

| length (x::xs) = 1 + length xsThings to notice:

No brackets! (I hate the damn parentheses)

Function application by juxtaposition

Function application has higher precedence than any infix operator

Compiler checks all the cases (try in the interpreter)

Let's try another! map, filter, exists, all, take, drop, takewhile, dropwhile

*** Length ***

fun length [] = 0

| length (x::xs) = 1 + length xs

val res = length [1,2,3]

*** Map ***

fun map f [] = []

| map f (x::xs) = (f x) :: (map f xs)

val res1 = map length [[], [1], [1,2], [1,2,3]]

*** Filter ***

fun filter pred [] = []

| filter pred (x::xs) =

let val rest = filter pred xs in

if pred x

then (x::rest)

else rest

end

val res2 = filter (fn x => (x mod 2) = 0) [1,2,3,4]

(* Note: the convention of using a question mark in the

names of predicates doesn't work in SML. *)

*** Exists ***

fun exists pred [] = false

| exists pred (x::xs) =

(pred x) orelse (exists pred xs)

val res3 = exists (fn x => (x mod 2) = 1) [1,2,3,4]

(* Note fn x => e is syntax for lambda in SML *)

*** All ***

fun all pred [] = true

| all pred (x::xs) =

(pred x) andalso (all pred xs)

val res4 = all (fn x => (x >= 0)) [1,2,3,4]

*** Take ***

exception ListTooShort

fun take 0 l = []

| take n [] = raise ListTooShort

| take n (x::xs) = x::(take (n-1) xs)

val res5 = take 2 [1,2,3,4]

val res6 = take 3 [1] handle ListTooShort => (print "List too short!"; [])

(* Note use of exceptions. *)

*** Drop ***

fun drop 0 l = l

| drop n [] = raise ListTooShort

| drop n (x::xs) = (drop (n-1) xs)

val res7 = drop 2 [1,2,3,4]

val res8 = drop 3 [1] handle ListTooShort => (print "List too short!"; [])

*** Takewhile ***

fun takewhile p [] = []

| takewhile p (x::xs) =

if p x then (x::(takewhile p xs)) else []

fun even x = (x mod 2 = 0)

val res8 = takewhile even [2,4,5,7]

val res9 = takewhile even [3,4,6,8]

*** Drop while ***

fun dropwhile p [] = []

| dropwhile p (a as (x::xs)) =

if p x then (dropwhile p xs) else a

val res10 = dropwhile even [2,4,5,7]

val res11 = dropwhile even [3,4,6,8]

(* fancy pattern form: a as (x::xs) *

*** Fold ***

fun foldr p zero [] = zero

| foldr p zero (x::xs) = p (x, (foldr p zero xs))

fun foldl p zero [] = zero

| foldl p zero (x::xs) = foldl p (p (x, zero)) xs

val res12 = foldr (op +) 0 [1,2,3,4]

val res13 = foldl (op * ) 1 [1,2,3,4]

(* Note op to convert an infix operator into a function *)ML---The Five Questions

Syntax: definitions, expressions, patterns, types

Values: num/string/bool, record/tuple, algebraic data

Environments: names stand for values (and types)

Evaluation: uScheme + case and pattern matching

Initial Basis: medium size; emphasizes lists

(Question Six: type system---a coming attraction)

A note about books

Ullman is easy to digest

Ullman is clueless about good style

Suggestion:

Tidbits:

The most important idea in ML!

Originated with Hope (Burstall, MacQueen, Sannella), in the same lab as ML, at the same time (Edinburgh!)

Board:

A "suit" is produced using hearts, diamonds, clubs, or spades

A "list of A" is produced using nil or a :: as, where a is an A and as is a "list of A"

A "heap of A" is either empty or it's an A and two child heaps

*** Datatype declarations ***

datatype suit = hearts | diamonds | clubs | spades

datatype 'a list = nil (* copy me NOT! *)

| op :: of 'a * 'a list

datatype 'a heap = EHEAP

| HEAP of 'a * 'a heap * 'a heap

type suit val hearts : suit, ...

type 'a list val nil : forall 'a . 'a list

val op :: : forall 'a .

'a * 'a list -> 'a list

type 'a heap

val EHEAP: forall 'a. 'a heap

val HEAP : forall 'a.'a * 'a heap * 'a heap -> 'a heapExegesis (on board):

Notation 'a is a type variable

Name before = introduces a new type constructor into the type environment. Type constructors may be nullary.

Alternatives separated by bars are value constructors of the type

They are new and hide previous names

(Do not hide built-in names nil and list from the initial basis!)

Value constructors participate in pattern matching

Complete by themselves: hearts, spades, nil

Expect parameters to make a value or pattern: ::, HEAP

op enables an infix operator to appear in a nonfix context

Type application is postfix

int list listNew names into two environments:

suit, list, heap stand for new type constructors

hearts, clubs, nil, ::, EHEAP, HEAP stand for new value constructors

Algebraic datatypes are inherently inductive (list appears in its own definition)---to you, that means finite trees

'a * 'a list is a pair type --- infix operators are always applied to pairs

Datatypes can define an enumerated type and associated values.

datatype suit = heart | diamond | spade | clubHere suit is the name of a new type.

The data constructors heart, dimaond, spade, and club are the values of type suit.

Data constructors are separated by vertical bars.

Datatypes are deconstructed using pattern matching.

fun toString heart = "heart"

| toString diamond = "diamond"

| toString spade = "spade"

| toString club = "club"

val suitName = toString heartdatatype IntTree = Leaf | Node of int * IntTree * IntTreeIntTree is the name of a new type.

There are two data constructors: Leaf and Node.

Nodes take a tuple of three arguments: a value at the node, and left and right subtrees.

The keyword of separates the name of the data constructor and the type of its argument.

When fully applied, data constructors have the type of the defining datatype (ie, IntTree).

We build values of type IntTree using the associated constructors: (Draw on board)

val tempty = Leaf

val t1 = Node (1, tempty, tempty)

val t2 = Node (2, t1, t1)

val t3 = Node (3, t2, t2)What is the in-order traversal of t3?

[1,2,1,3,1,2,1]What is the pre-order traversal of t3?

[3,2,1,1,2,1,1](The @ symbol denotes append in ML)

fun inOrder Leaf = []

| inOrder (Node (v, left, right)) =

(inOrder left) @ [v] @ (inOrder right)

val il3 = inOrder t3

fun preOrder Leaf = []

| preOrder (Node (v, left, right)) =

v :: (preOrder left) @ (preOrder right)

val pl3 = inOrder t3IntTree is monomorphic because it has a single type.

Note though that the inOrder and preOrder functions only cared about the structure of the tree, not the payload value at each node.

Polymorphic datatypes are written using type variables that can be instantiated with any type.

datatype 'a tree = Child | Parent of 'a * 'a tree * 'a treetree is a type constructor (written in post-fix notation), which means it produces a type when applied to a type argument.

Examples:

int tree is a tree of integers

bool tree is a tree of booleans

char tree is a tree of characters

int list tree is a tree of a list of integers.

'a is a type variable: it can represent any type.

It is introduced on the left-hand of the = sign. References on the right-hand side are types.

Child and Parent are data constructors.

Child takes no arguments, and so has type 'a tree

When given a value of type 'a and two 'a trees, Parent produces a 'a tree

tree valuesval empty = Child

val tint1 = Parent (1, empty, empty)

val tint2 = Parent (2, tint1, tint1)

val tint3 = Parent (3, tint2, tint2)

val tstr1 = Parent ("a", empty, empty)

val tstr2 = Parent ("b", tstr1, tstr1)

val tstr3 = Parent ("c", tstr2, tstr2)tree valuesfun inOrder Child = []

| inOrder (Parent (v, left, right)) =

(inOrder left) @ [v] @ (inOrder right)

fun preOrder Child = []

| preOrder (Parent (v, left, right)) =

v :: (preOrder left) @ (preOrder right)Functions inOrder and preOrder are polymorphic: they work on any value of type 'a tree. 'a is a type variable and can be replaced with any type.

Datatype declarations introduce names into:

the type environment: suit, IntTree, tree

the value environment: heart, Leaf, Parent

Datatype declarations are inherently inductive:

the type IntTree appears in its own definition

the type tree appears in its own definition

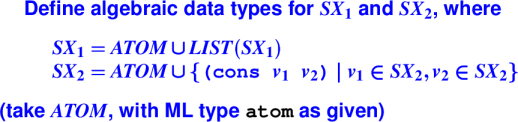

Wait for it ...

*** Exercise answers ***

datatype sx1 = ATOM1 of atom

| LIST1 of sx1 list

datatype sx2 = ATOM2 of atom

| PAIR2 of sx2 * sx2Eliminate values of algebraic types

New language construct case (an expression)

fun length xs =

case xs

of [] => 0

| (x::xs) => 1 + length xsAt top level, fun better than case

When possible, write

fun length [] = 0

| length (x::xs) = 1 + length xscase works for any datatype

fun toStr t =

case t

of Leaf => "Leaf"

| Node(v,left,right) => "Node"But often pattern matching is better style:

fun toStr' Leaf = "Leaf"

| toStr' (Node (v,left,right)) = "Node"Part of learning any new field: talk to people in their native vocabulary

Introduce means "produce", "create", "make", "define"

Eliminate means "consume", "examine", "observe", "use"

It's like knowing what to say when somebody sneezes.

Types and their uses:| Type | Produce | Consume |

| Introduce | Eliminate | |

| arrow (function) | Function definition or Lambda (fn) | Application |

| algebraic datatype | Apply constructor | Case or Pattern match |

| tuple | (e1, ..., en) | Case or Pattern match! |

val (x,y) = (1,2)

val (left, pivot, right) = split xs

val (n,xs) = (3, [1,2,3])

val (x::xs) = [1,2,3]

val (_::xs) = [1,2,3]exception EmptyQueueraise e where e : exne1 handle pat => e2Handler uses pattern matching

e handle pat1 => e1 | pat2 => e2

*** Exception handling in action ***

loop (evaldef (reader (), rho, echo))

handle EOF => finish ()

| Div => continue "Division by zero"

| Overflow => continue "Arith overflow"

| RuntimeError msg => continue ("error: " ^ msg)

| IO.Io {name, ...} => continue ("I/O error: " ^

name)

| SyntaxError msg => continue ("error: " ^ msg)

| NotFound n => continue (n ^ "not found")ML Traps and pitfalls:

*** Order of clauses matters ***

fun take n (x::xs) = x :: take (n-1) xs

| take 0 xs = []

| take n [] = []

(* what goes wrong? *)

*** Gotcha --- overloading ***

- fun plus x y = x + y;

> val plus = fn : int -> int -> int

- fun plus x y = x + y : real;

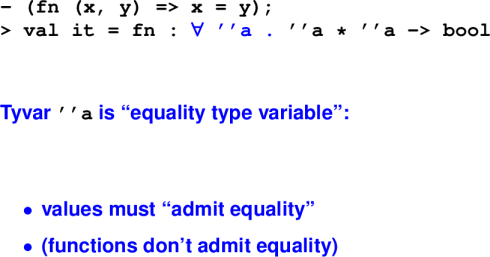

> val plus = fn : real -> real -> realGotcha --- equality types:

Gotcha --- parentheses

Put parentheses around anything with |

case,handle,fn

Function application has higher precedence than any infix operator

*** Syntactic sugar for lists ***

- 1 :: 2 :: 3 :: 4 :: nil; (* :: associates to the right *)

> val it = [1, 2, 3, 4] : int list

- "the" :: "ML" :: "follies" :: [];

> val it = ["the", "ML", "follies"] : string list

> concat it;

val it = "theMLfollies" : stringML from 10,000 feet:

The value environment

Names bound to immutable values

Immutable

refandarrayvalues point to mutable locations

ML has no binding-changing assignment

Definitions add new bindings (hide old ones):

valpattern=expval recpattern=expfunident patterns=expdatatype... = ...

Nesting environments

At top level, definitions

Definitions contain expressions:

def ::=

valpattern=exp

Expressions contain definitions:

exp ::=

letdefsinexpend

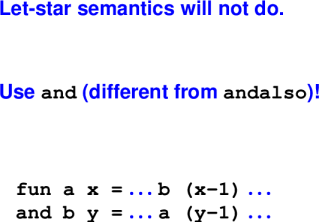

Sequence of defs has let-star semantics

What is a pattern?

pattern ::= variable

| wildcard

| value-constructor [pattern]

| tuple-pattern

| record-pattern

| integer-literal

| list-patternDesign bug: no lexical distinction between

Workaround: programming convention

Function pecularities: 1 argument

Each function takes 1 argument, returns 1 result

For "multiple arguments," use tuples!

fun factorial n =

let fun f (i, prod) =

if i > n then prod else f (i+1, i*prod)

in f (1, 1)

end

fun factorial n = (* you can also Curry *)

let fun f i prod =

if i > n then prod else f (i+1) (i*prod)

in f 1 1

endTuples are "usual and customary."

Mutual recursion:

Type systems

Typing rules for a simple language

Type checker for a simple language

Adding environments

What kind of value do we have?

Type systems classify values.

n + 1 : int

"hello" ^ "world" : string

(fn n => n * (n - 1)) : int -> int

if p then 1 else 0 : int if p : boolQuestions type systems can answer:

What kind of value does it evaluate to (if it terminates)?

What is the contract of the function?

Does this program contain certain kinds of errors?

Who has the rights to look at it/change it?

Is the number miles or millimeters?

Questions type systems generally cannot answer:

Will my program contain a division by zero?

Will my program contain an array bounds error?

Will my program take the car of `'()?

Will my program terminate?

Suppose L is a Turing-Complete Language.

TP is the set of programs in L that terminate.

Wish: a type system to statically classify terminating programs:

Expression e in L has type T (e : T) iff e terminates.

But: Undecideable!

We can prove no such type system exists.

Choices:

Allow type checker to run forever.

Don't use type system to track termination.

Most languages use a combination of static and dynamic checks

Static: "for all inputs"

input independent

efficient at run-time

approximate : rules out some programs that won't trigger errors example: (if false then 2 else "hi") ^ "there"

Dynamic: "for some inputs"

depends on input

run-time overhead

precise

As a working definition, a set of values

As a precise definition, a classifier for terms!!

Source of new language ideas for next 20 years

Needed if you want to understand advanced designs (or create your own)

Define an AST for expressions with:

Language of expressions

Numbers and Booleans:

datatype exp = ARITH of arithop * exp * exp

| CMP of relop * exp * exp

| LIT of int

| IF of exp * exp * exp

and arithop = PLUS | MINUS | TIMES | ...

and relop = EQ | NE | LT | LE | GT | GE

datatype ty = INTTY | BOOLTYCan't add an integer and a boolean:

3 + (3 < 99)

(ARITH(PLUS, LIT 3, CMP (LT, LIT 3, LIT 99)))Can't compare an integer and a boolean

(3 < (4 = 24))

CMP (LT, LIT 3, CMP(EQ (LIT 4, LIT 24)))Form of judgment Context |- term : type

Written |- e : tau

Contexts vary between type systems

(Right now, the empty context)

Inference rules determine how to code val tc : exp -> ty:

Given e, find tau such that |- e : tau

What inference rules do you recommend for this language?

Informal example:

|- 3 : int |- 5 : int

------------------------------------------------------------

|- 3 + 5 : intRules out:

|- 'a' : char |- 5 : int

------------------------------------------------------------

|- 'a' + 5 : ???General form:

|- e1 : int |- e2 : int

------------------------------------------------------------

|- ARITH ( _ , e1, e2) : intInformal example:

|- 7 : int |- 10 : int

------------------------------------------------------------

|- 7 < 10 : boolGeneral form:

|- e1 : int |- e2 : int

------------------------------------------------------------

|- CMP ( _ , e1, e2) : boolInformal example:

|- 14 : intGeneral form:

-----------------------------------

|- LIT (n) : intInformal example:

|- true : bool

|- 3 : int

|- 42 : int

------------------------------------------------------------

|- IF (true, 3, 42) : intGeneral form:

|- e : bool

|- e1 : tau1

|- e2 : tau2 tau1 equiv tau2

------------------------------------------------------------

|- IF ( e, e1, e2) : tau1Experience shows it is better to test two types for equivalence than to write rule with same type appearing twice.

Typing rules let us read off what a type checker needs to do.

input to checker: e

output from checker: tau

val tc : exp -> ty

exception IllTyped

fun tc (ARITH (_, e1, e2)) =

case (tc e1, tc e2)

of (INTTY, INTTY) => INTTY

| _ => raise IllTyped

| tc (CMP (_, e1, e2)) =

case (tc e1, tc e2)

of (INTTY, INTTY) => BOOLTY

| _ => raise IllTyped

| tc (LIT _) = INTTY

| tc (IF (e,e1,e2)) =

case (tc e, tc e1, tc e2)

of (BOOLTY, tau1, tau2) =>

if eqType(tau1, tau2)

then tau1 else raise IllTyped

| _ => raise IllTyped

An implementor's trick: If you see identical types in a rule,

Give each type a distinct subscript

Introduce equality constraints

Remember to be careful using primitive equality to check types---you are better off with eqType.

Add variables and let binding to our language, what happens?

Extended language of expressions

Numbers and Booleans:

datatype exp = ARITH of arithop * exp * exp

| CMP of relop * exp * exp

| LIT of int

| IF of exp * exp * exp

| VAR of name

| LET of name * exp * exp

and arithop = PLUS | MINUS | TIMES | ...

and relop = EQ | NE | LT | LE | GT | GE

datatype ty = INTTY | BOOLTYWhat could go wrong with a variable?

Used inconsistently:

;; x can't be both an integer and a list

x + x @ x

;; y can't be both an integer and a string

let y = 10 in y ^ "hello" end

Need to track variable use to ensure consistency

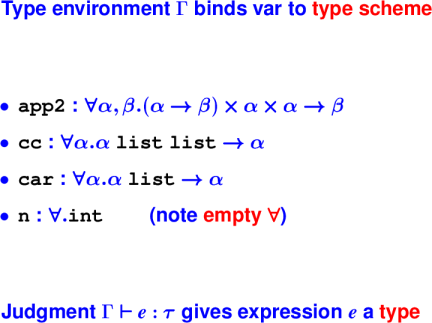

Key idea: Type environment (Gamma) tracks the types of variables.

x in domain Gamma tau = Gamma(x)

------------------------------------------------------------

Gamma |- VAR x : tauGamma |- e : tau

Gamma{x->tau} |- e' : tau'

------------------------------------------------------------

Gamma |- LET x = e in e' : tau'Type checker needs Gamma -- gives type of each "term variable".

val tc : ty env -> exp -> ty

fun tc Gamma (ARITH ... ) = <as before>

| tc Gamma (VAR x) =

case Gamma (x)

of Some tau => tau

| None => raise IllTyped

| tc Gamma (LET x, e1, e2) =

let tau1 = tc Gamma e1

in tc (extend Gamma x tau1) e2

end Introduction:

Gamma{x->tau1} |- e : tau2

------------------------------------------------------------

Gamma |- fn x : tau1 => e : tau1 -> tau2Elimination:

Gamma |- e : tau1 -> tau2

Gamma |- e1 : tau1

------------------------------------------------------------

Gamma |- e e1 : tau2Review

I gave you syntax for simple language

You came up with typing rules

I showed you how to implement the type checker.

Then on your homework,

You will design new syntax and typing rules for lists

You will extend an existing type checker

You will implement a full type checker from scratch

This is a big chunk of what language designers do.

Midterm review

Sample Problems

Course Evaluations

Plan on:

Writing some code uScheme and ML code

Reasoning about code (uScheme or ML)

Working with semantics

Recursion and Induction

Write recursive functions

Prove properties using induction

Algebraic laws can lead naturally to recursive functions and inductive proofs

Understanding a language: Key Questions

What is the abstract syntax?

What are the values?

What are the environments?

How does evaluation happen?

What is the initial basis?

What are the types?

First-class functions

What they are

How to use them effectively

Lambdas create anonymous functions

Closures are run-time representation of functions; they capture the environment at closure-definition time

Continuations capture the rest of the computation

Local bindings

Various forms: let, let*, and letrec

What is let used for?

How do the various forms differ?

Data structures and associated operations

S-expressions ((), null?, cons, car, cdr, ...)

Lists

Tuples

Cost Models and Optimizations

How many cons cells?

How many activation records?

Method of accumulating parameters

Tail calls

Operational semantics

Precisely describe meaning of programs

What value does a program evaluate to?

What side effects are caused in the process?

Written using inference rules

Judgement forms capture all relevant information

Environments track information about variables

Stores map locations to values

ML

Datatypes

Declarations introduce type constructor & data constructors

Datatypes can be recursive

Type variables allow polymorphic data structures

ML Pattern Matching

Deconstruct values: datatypes, lists, tuples, ...

Bind variables

Appear in function definitions, case expressions, and let bindings

ML Exceptions

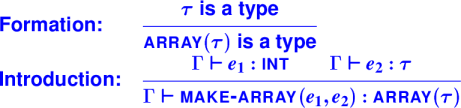

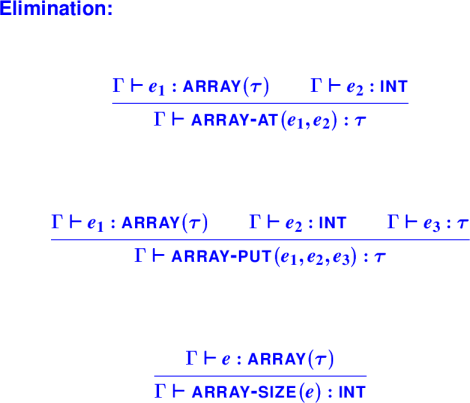

Type checking with type constructors

Formation, Introduction, and Elimination Rules

New watershed in the homework

You've been developing and polishing programming skills: recursion, higher-order functions, using types to your advantage. But the problems have been mostly simple problems around simple data structures, mostly lists.

We're now going to shift and spend the next several weeks doing real programming-languages stuff, starting with type systems.

You've already seen everything you need to know to implement a basic type checker, and you are almost fully equipped to add array operations and types to Typed Impcore.

What's next is much more sophisticated type systems, with an infinite number of types. We'll focus on two questions about type systems:

What is and is not a good type, that is, a classifier for terms?

How shall we represent types?

We'll look at these questions in two contexts: monomorphic and polymorphic languages.

Monomorphic types have "one shape."

int, bool, int -> bool, int * intPolymorphic types have "many shapes."

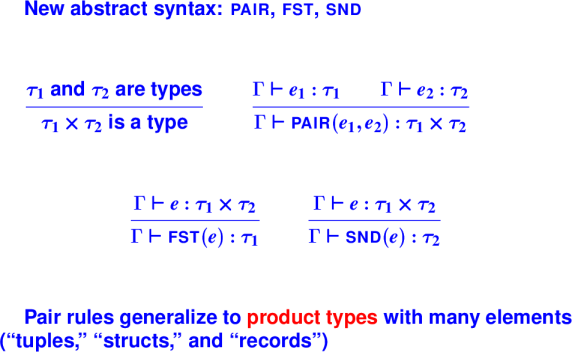

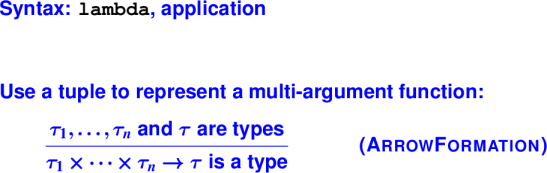

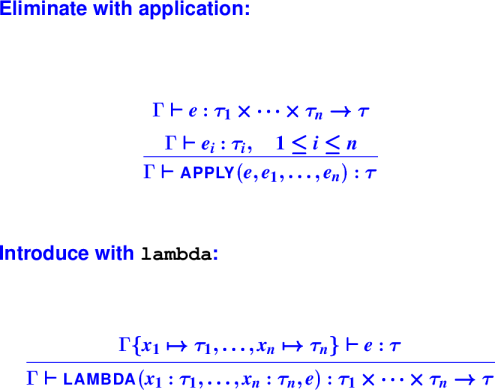

'a list, 'a list -> 'a list, ('a * int)Language designer's agenda:

What new types do I have (formation rules)?

What new syntax do I have to create terms of a type (introduction rules)?

What new syntax do I have to observe terms of a type (elimination rules)?

Here's how it works:

Every new variety of type requires special syntax

We get three kinds of typing rules: formation, introduction, and elimination

Implementation is a straightforward application of what you already know.

Question: If I add lists to a language, how many new types am I introducing?

Examples: Well-formed types

These are types:

intboolint * boolint * int -> intExamples: Not yet types, or not types at all

These "types in waiting" don't classify any terms

list (but int list is a type)array (but char array is a type)ref (but (int -> int) ref is a type)These are utter nonsense

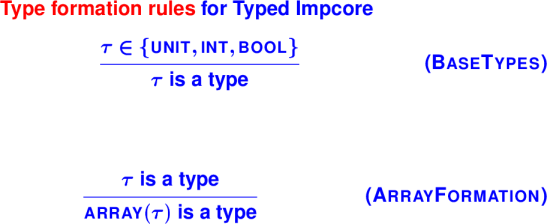

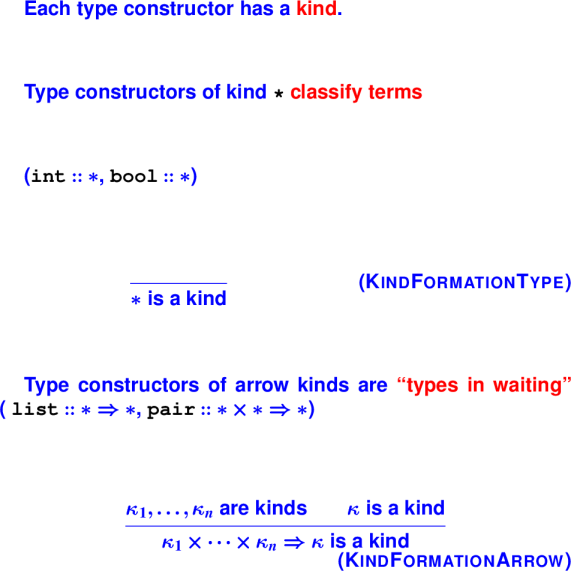

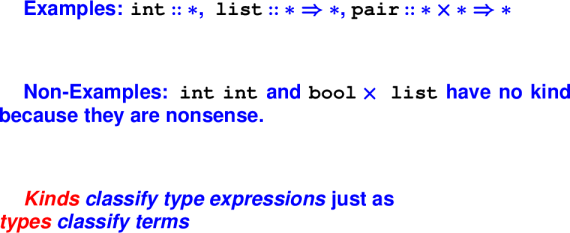

int intbool * arrayType formation rules

We need a way to classify type expressions into:

types that classify terms

type constructors that build types

nonsense terms that don't mean anything

Type constructors

Technical name for "types in waiting"

Given zero or more arguments, produce a type:

int, bool, char also called base typeslist, array, ref->More complex type constructors:

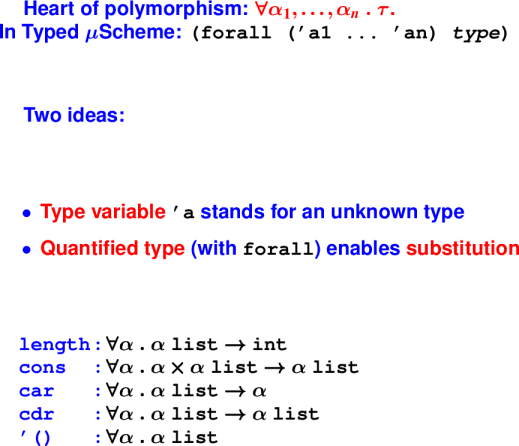

What's a good type?:

Type judgments for monomorphic system

Two judgments:

Product types: Both x and y:

(At run time, identical to cons, car, cdr)

Arrow types: Function from x to y:

Arrow types: Function from x to y:

Typical syntactic support for types

Explicit types on lambda and define:

For lambda, argument types:

(lambda ([n : int] [m : int]) (+ (* n n) (* m m)))For define, argument and result types:

(define int max ([x : int] [y : int])

(if (< x y) y x))Abstract syntax:

datatype exp = ...

| LAMBDA of (name * tyex) list * exp

...

datatype def = ...

| DEFINE of name * tyex * ((name * tyex) list * exp)

...Array types: Array of x:

Array types continued:

*** Type-checking LAMBDA ***

datatype exp = LAMBDA of (name * tyex) list * exp

...

fun ty (Gamma, LAMBDA (formals, body)) =

let val Gamma' = (* body gets new env *)

foldl (fn ((n, ty), g) => bind (n, ty, g))

Gamma formals

val bodytype = ty(Gamma', body)

val formaltypes =

map (fn (n, ty) => ty) formals

in funtype (formaltypes, bodytype)

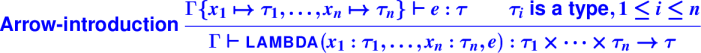

endType formation: Composing types

Typed Impcore:

Closed world (no new types)

Simple formation rules

Standard ML:

Open world (programmers create new types)

How are types constructed (from other types)?

Can't add new syntactic forms and new type formation rules for every new type.

Representing type constructors generically:

Question: How would you represent an array of pairs of booleans?:

Question: How would you represent an array of pairs of booleans?:

Well-formed types

We still need to classify type expressions into:

types that classify terms (eg, int)

type constructors that build types (eg, list)

nonsense terms that don't mean anything (eg, int int)

Idea:

We use types to classify expressions.

Apply the same idea to classify types.

Kinds are to types as types are to expressions.

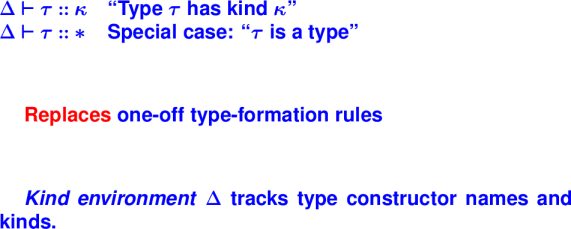

Type formation through kinds:

Use kinds to give arities:

The kinding judgment:

Kinding rules for types:

Monomorphic types are limiting

Each new type constructor requires

Monomorphism hurts programmers too

Monomorphism leads to code duplication

User-defined functions are monomorphic:

(define int lengthI ((list int) l)

(if (null? l) 0 (+ 1 (lengthI (cdr l)))))

(define int lengthB ((list bool) l)

(if (null? l) 0 (+ 1 (lengthB (cdr l)))))

(define int lengthS ((list sym) l)

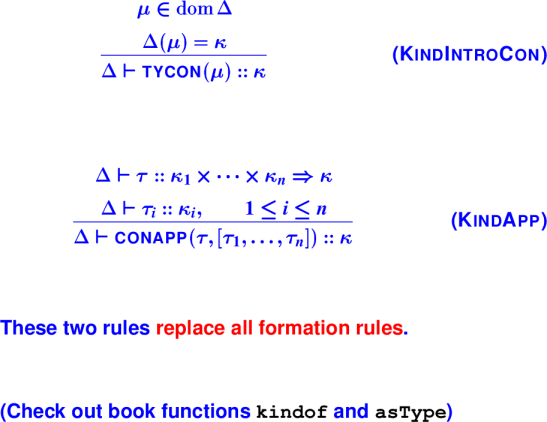

(if (null? l) 0 (+ 1 (lengthS (cdr l)))))Quantified types:

Representing quantified types

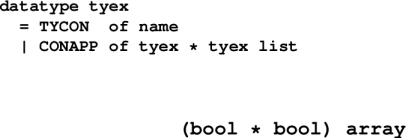

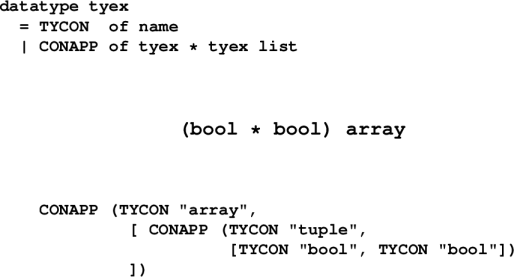

Two new alternatives for tyex:

datatype tyex

= TYCON of name

| CONAPP of tyex * tyex list

| FORALL of name list * tyex

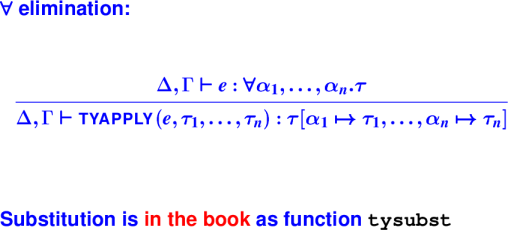

| TYVAR of nameKinding rules for quantified types:

*** Programming with these types ***

Substitute for quantified variables

-> length

<procedure> : (forall ('a) ((list 'a) -> int))

-> (@ length int)

<procedure> : ((list int) -> int)

-> (length '(1 2 3))

type error: function is polymorphic; instantiate before applying

-> ((@ length int) '(1 2 3))

3 : int

*** Substitute what you like ***

-> length

<procedure> : (forall ('a) ((list 'a) -> int))

-> (@ length bool)

<procedure> : ((list bool) -> int)

-> ((@ length bool) '(#t #f))

2 : int

*** More ``Instantiations'' ***

-> (val length-int (@ length int))

length-int : ((list int) -> int)

-> (val cons-bool (@ cons bool))

cons-bool : ((bool (list bool)) ->

(list bool))

-> (val cdr-sym (@ cdr sym))

cdr-sym : ((list sym) -> (list sym))

-> (val empty-int (@ '() int))

() : (list int)Bonus instantiation:

-> map

<procedure> :

(forall ('a 'b)

(('a -> 'b) (list 'a) -> (list 'b)))

-> (@ map int bool)

<procedure> :

((int -> bool) (list int) -> (list bool))Create your own!

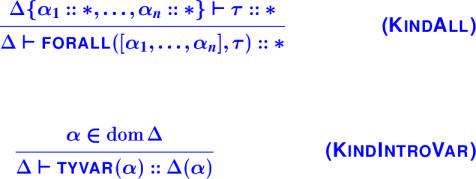

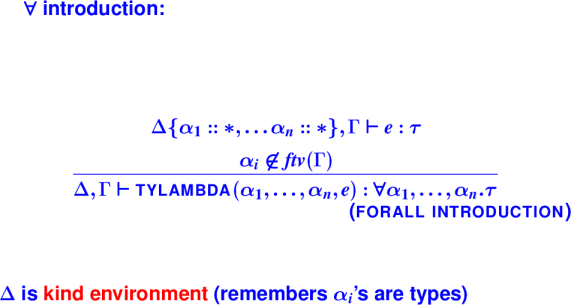

Abstract over unknown type using type-lambda

-> (val id (type-lambda ['a]

(lambda ([x : 'a]) x )))

id : (forall ('a) ('a -> 'a))'a is type parameter (an unknown type)

This feature is parametric polymorphism

Two forms of abstraction:

| term | type |

lambda

|

function (arrow)

|

type-lambda

|

forall

|

Power comes at notational cost

Function composition

-> (val o (type-lambda ['a 'b 'c]

(lambda ([f : ('b -> 'c)]

[g : ('a -> 'b)])

(lambda ([x : 'a]) (f (g x))))))

o : (forall ('a 'b 'c)

(('b -> 'c) ('a -> 'b) -> ('a -> 'c)))Aka o :

Instantiate by substitution:

Generalize with type-lambda:

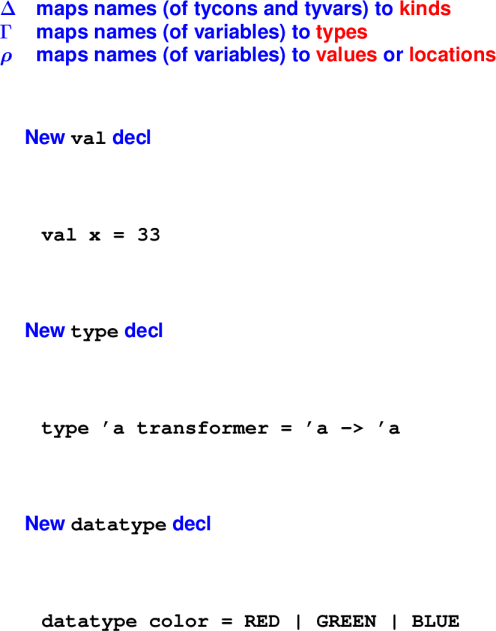

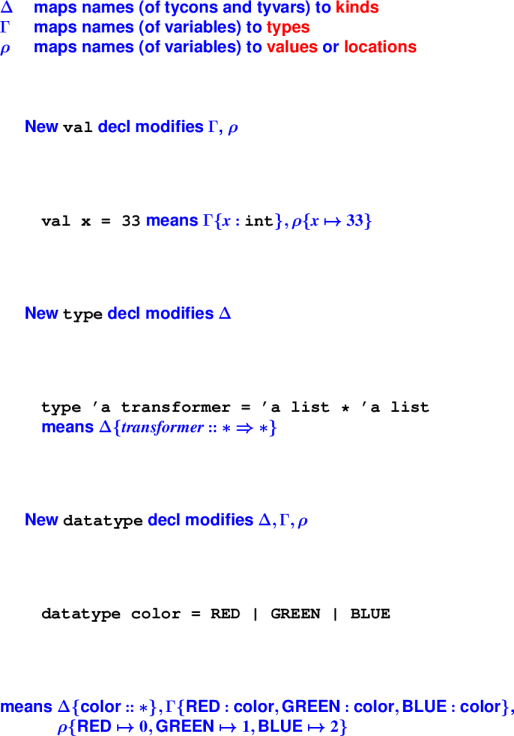

*** A phase distinction embodied in code ***

-> (val x 3)

3 : int

-> (val y (+ x x))

6 : int

fun checkThenEval (d, (delta, gamma, rho)) =

let val (gamma', tystring) = elabdef (d, gamma, delta)

val (rho', valstring) = evaldef (d, rho)

val _ = print (valstring ^ " : " ^ tystring)

in (delta, gamma', rho')

endThree environments --- what happens?:

Three environments revealed:

Exercise: Three environments:

Final: Thursday, December 15, 8:30 to 10:30.

Send email to comp105-grades@cs.tufts.edu if you have another exam at the same time.

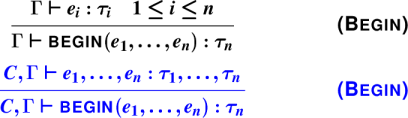

Type Inference Intuition

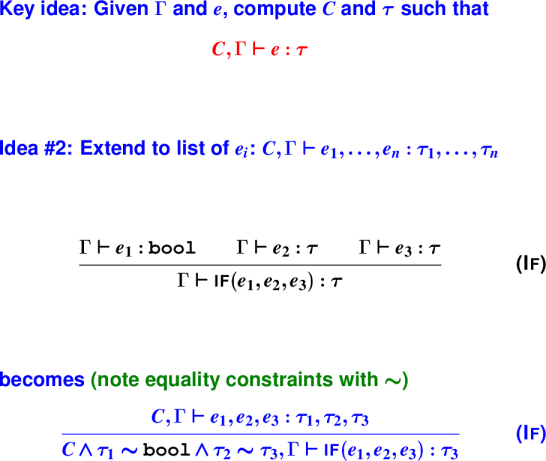

Formalization

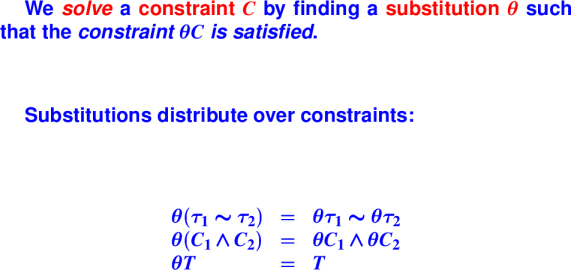

Constraints!

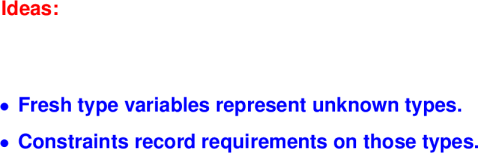

Fresh type variables represent unknown types.

Constraints record knowledge about type variables.

Useful in its own right (as we'll see shortly)

Canonical example of Static Analysis

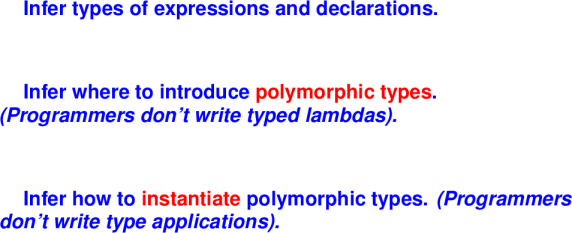

New topic: Type inference:

*** What type inference accomplishes ***

-> (define double (x) (+ x x))

double ;; uScheme

-> (define int double ([x : int]) (+ x x))

double : (int -> int) ;; Typed uSch.

-> (define double (x) (+ x x))

double : int -> int ;; nMLThe compiler tells you useful information and there is a lower annotation burden.

*** What else type inference accomplishes ***

-> ((@ cons bool) #t ((@ cons bool) #f (@ '() bool)))

(#t #f) : (list bool) ;; typed uScheme

-> ( cons #t ( cons #f '() ))

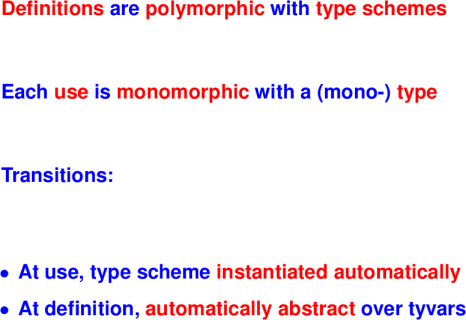

(#t #f) : bool list ;; nMLKey ideas:

For each unknown type, introduce a fresh type variable

Collect and enforce equality constraints

Introduce polymorphism at let/val bindings

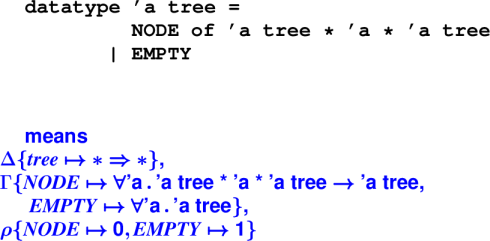

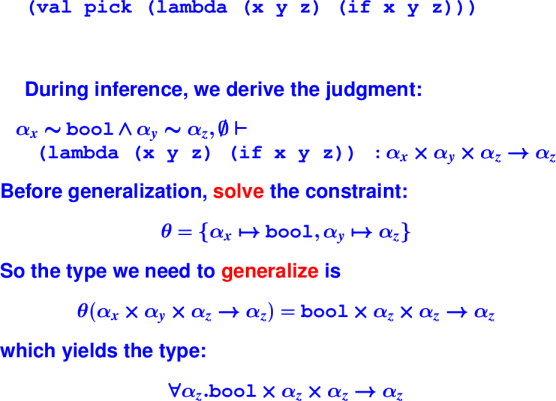

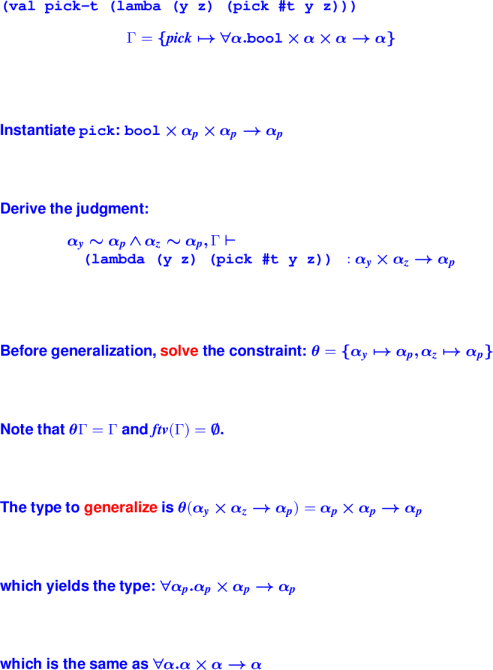

{Examples}:

Let's do an example on the board

(val-rec double (lambda (x) (+ x x)))What do we know?

double has type ʹa1

x has type ʹa2

+ has type int * int -> int

(+ x x) is an application, what does it require?

int and ʹa2 = intIs this possible?

Key idea: Record the constraint in a typing judgement.

'a2 = int /\ 'a2 = int, { double : 'a1, x : 'a2 } |- (+ x x) : intExample: if

(if y 1 0)

y has type ʹa3, 1 has type int, 0 has type int

Requires what constraints? (int = int, ʹa3 = bool`)

Example:

(if z z (- 0 z))

z has type ʹa3, 0 has type int, - has type int * int -> int

Requires what constraints? (ʹa3 = bool /\ int = int /\ ʹa3 = int)

Is this possible?

Why not?

let val app2 = (lambda (f x y)

(begin

(f x)

(f y)))Assume f : 'a_f

Assume x : 'a_x

Assume y : 'a_y

f x implies 'a_f = 'a_x -> 'a

f y implies 'a_f = 'a_y -> 'a'

Together, these constraints imply 'a_x = 'a_y and 'a = 'a'

begin implies result of function is 'a

So, app2 : ('a_x -> 'a) * 'a_x * 'a_x -> 'a

'a_x and 'a aren't mentioned anywhere else in program, so

we can generalize to:

forall 'a_x, 'a . ('a_x -> 'a) * 'a_x * 'a_x -> 'a

which is the same thing as:

forall 'a, 'b . ('a -> 'b) * 'a * 'a -> 'b

*** Exercise: Give the type of cc ***

let val cc = (lambda (nss) (car (car nss)))Assume nss : 'b

We know car : forall 'a . 'a list -> 'a

=> car_1 : 'a1 list -> 'a1

=> car_2 : 'a2 list -> 'a2

(car_1 nss) => 'b = 'a1 list

(car_2 (car_1 nss)) => 'a1 = 'a2 list

(car_2 (car_1 nss)) : 'a2

nss : 'b

: 'a1 list

: ('a2 list) listSo, cc : ('a2 list) list -> 'a2

Because 'a2 is unconstrained, we can generalize:

cc : forall 'a . ('a2 list) list -> 'a

*** Exercise: Give the type of cc ***

let val cc = (lambda (nss) (car (car nss)))

forall 'a . 'a list list -> 'aFormalizing Type Inference

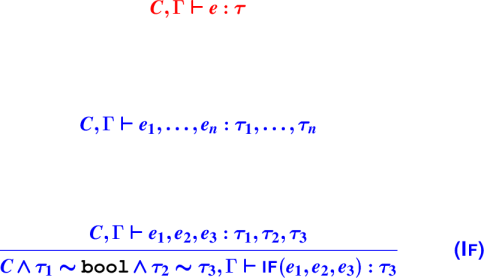

Sad news: Full type inference for polymorphism is not decidable.

Solution: Parameters have monomorphic types.

Consequence: Polymorphic functions are not first class.

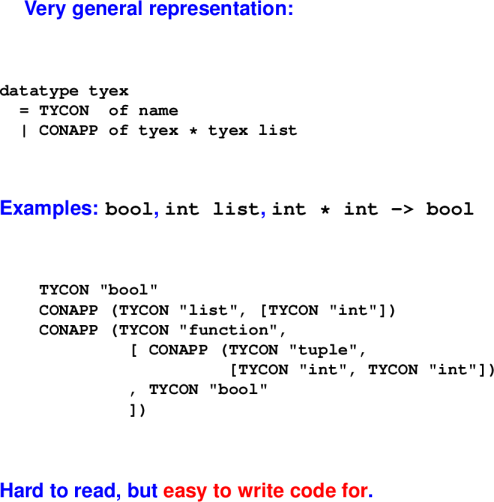

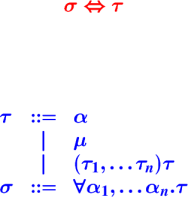

*** Hindley-Milner types ***

datatype ty

= TYCON of name

| CONAPP of ty * ty list

| TYVAR of name

datatype type_scheme

= FORALL of name list * tyKey ideas:

Key ideas:

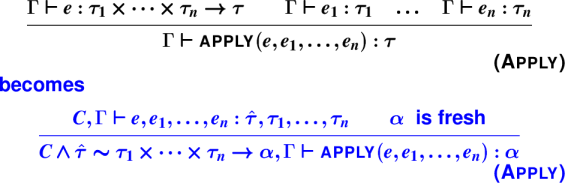

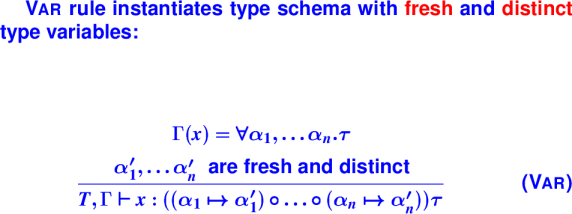

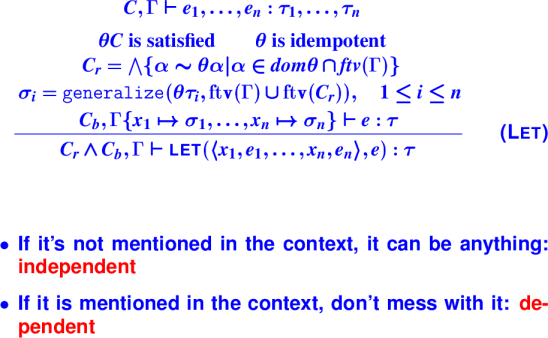

{Type inference}:

{Apply rule}:

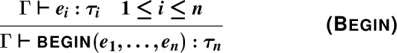

{Exercise: Begin Rule}:

{Exercise: Begin Rule}:

{Type inference, operationally}:

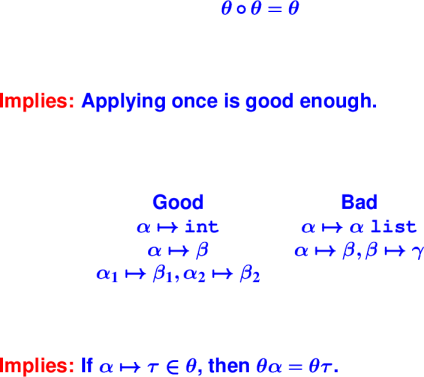

*** Representing Constraints ***

datatype con = ~ of ty * ty

| /\ of con * con

| TRIVIAL

infix 4 ~

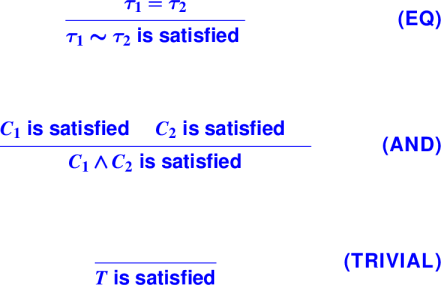

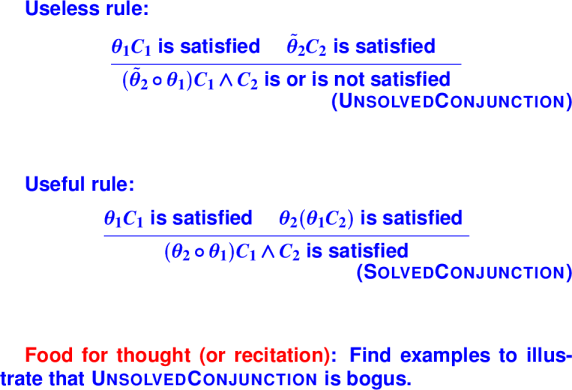

infix 3 /\{Solving Constraints}:

When is a constraint satisfied?:

{Examples}:

Board: which of these have solutions?

int ~ bool

int list ~ bool list

'a ~ int

'a ~ int list

'a ~ int -> int

'a ~ 'a

'a * int ~ bool * 'b

'a * int ~ bool -> 'b

'a ~ ('a, int)

'a ~ tau (arbitrary tau)Question: in solving tau1 ~ tau2, how many potential cases are there to consider?

Question: how are you going to handle each case?

{Solving Constraint Conjunctions}:

So far

After this lecture, you can write solve, a function which, given a constraint C, has one of three outcomes:

Returns the identity substitution in the case where C is trivially satisfied

Returns a non-trivial substitution if C is satisfiable otherwise.

Calls unsatisfiableEquality in when C cannot be satisfied

You can also write a type inferencer ty for everything except let forms. (Coming Wednesday)

Office hour visits

Type inference, continued

Moving from type schema to types (Instantiation)

Moving from types to type schema (Generalization)

Type Inference:

Key Idea:

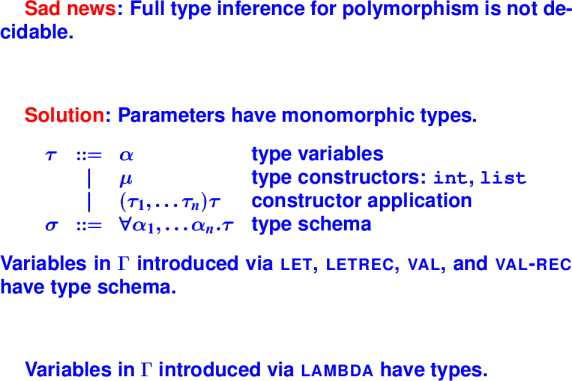

Judgement forms:

Formalizing Type Inference:

For variables introduced by let, letrec, val, and val-rec, we have the expression that gets the polymorphic type. For lambda, the value is the argument to the function and not known at type checking time.

Moving between type schema and types:

From Type Schema to Types:

Consider

Gamma = {fst : forall 'a 'b. 'a * 'b -> 'a, y : 'ay}

??, Gamma |- if (y, fst 2 3, 4) : ??Imagine we ignore the freshness constraint when instantiating fst:

fst : 'ay * 'b -> 'ayFrom if, we get the constraints:

'ay ~ bool

'ay ~ intwhich aren't satisfiable. Which means this code would be erroneously flagged as an error.

Correct typing:

'ay ~ bool, Gamma |- if (y, fst 2 3, 4) : intfst : 'a * 'a -> 'a

Gamma |- fst 2 #tApplication rule yields constraints:

'a ~ int

'a ~ boolwhich aren't satisfiable. Which means this code would also be erroneously flagged as an error.

Correct typing:

Gamma |- fst 2 #t : intFrom Types to Type Schema:

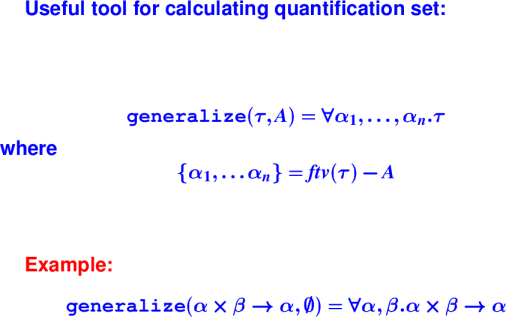

Generalize Function:

The set A above will be useful when some variables in τ are mentioned in the environment.

We can't generalize over those variables.

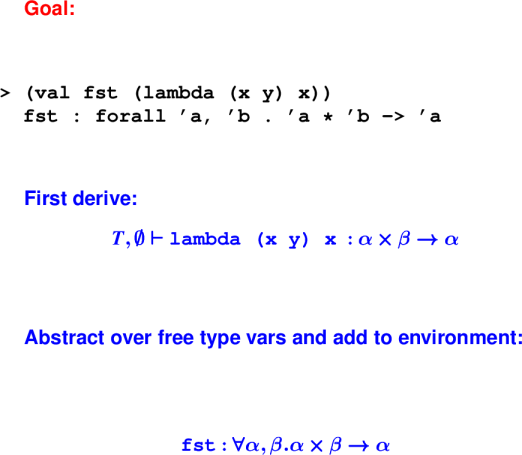

Applying idea to the type inferred for the function fst:

generalize('a * 'b -> 'a, emptyset) = forall 'a, 'b. 'a * 'b -> 'a

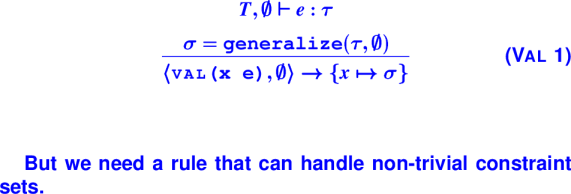

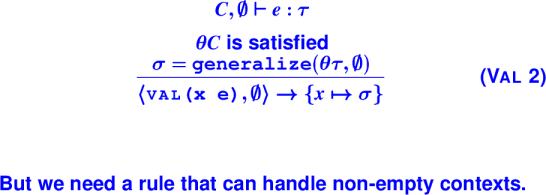

First Candidate VAL rule:

Note the new judgement form above for type checking a declaration.

Example:

Second Candidate VAL rule:

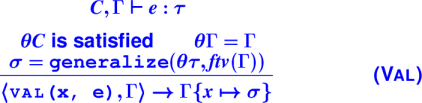

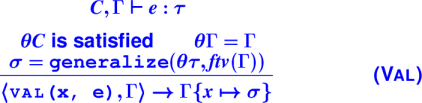

VAL rule:

On the condition ΘΓ = Γ: Γ is "input": it cna't be changed.

The condition ensures that Θ doen't conflict with Γ.

We can't generalize over free type variables in Γ.

Their presence indicates they can be used somewhere else, and hence they aren't free to be instantiated with any type.

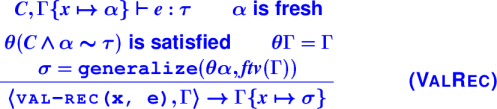

Type inference

letval-rec and let-recVAL rule:

Example of Val rule with non-empty Γ:

*** Let Examples ***

(lambda (ys)

(let ((s (lambda (x) (cons x '()))))

(pair (s 1) (s #t))))

(lambda (ys)

(let ((extend (lambda (x) (cons x ys))))

(pair (extend 1) (extend #t))))

(lambda (ys)

(let ((extend (lambda (x) (cons x ys))))

(extend 1)))Let:

Idempotence:

VAL-REC rule:

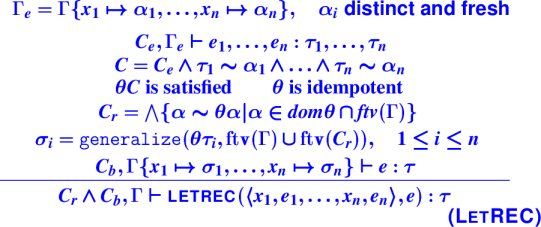

LetRec:

Forall things

val and val-rec |

let, letrec, ... |

lambda |

|---|---|---|

| FORALL contains all variables (because none are free in the context) | FORALL contains variables not free in the context | FORALL is empty |

| Generalize over all variables (because none are free in the context) | Generalize over variables not free in the context | Never generalize |

Splitting constraints for let rules

Inferring recursive types

Where have we been?

Success stories:

What about big programs?

An area of agreement and a great divide:

INFORMATION HIDING

/ \

/ \

reuse / \ modular reasoning

/ \

/ \

OBJECTS MODULESTwo kinds of reuse:

Don't conflate them!

We know that mixing code and data can create powerful abstractions (function closures)

Objects are another way to mix code and data

Pioneers were Nygaard and Dahl, who added objects to Algol 60, producing SIMULA-67, the first object-oriented language

Bjarne Stroustrup liked Simula but wanted complete control of costs, so he created C++

James Gosling wanted something a little cleaner and a little more like Simula, created Java

Microsoft funded C#

Objects are everywhere

Agglutination containing

Some mutable state (instance variables)

Code that can respond to messages (code is called methods)

A lot like a closure

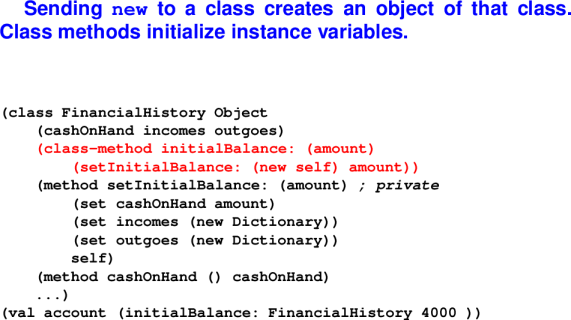

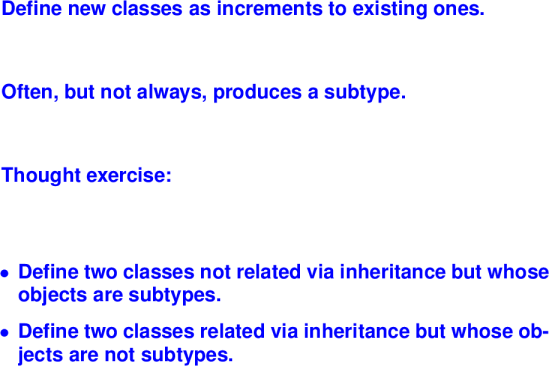

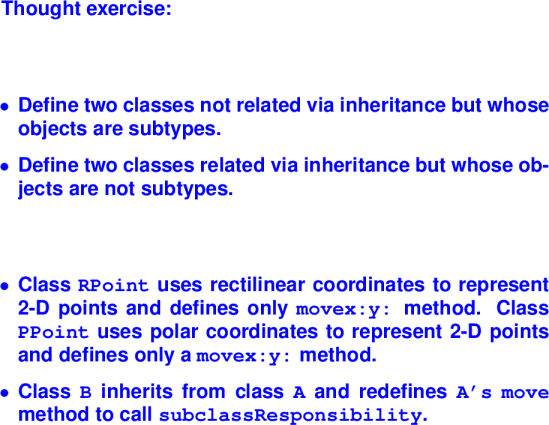

A class is a way to define objects. It provides a recipe for object construction, defining the methods (code) and specifying how to initialize the instance variables (state) via constructors.

Not really useful for building small things

If you build a big, full-featured abstraction, you can easily use inheritance to build another, similar abstraction. Very good at adding new kinds of things that behave similarly to existing things.

Programs that are evolving

A particular kind of evolution: operations stay the same, but we add new kinds of things

Example: GUIs (operations are paint and respond-to-mouse-click)

Example: numbers

For your homework, you'll take a Smalltalk system that has three kinds of numbers, and you'll add a fourth kind of number.

If you do anything at all interesting, your control flow becomes smeared out over half a dozen classes, and your algorithms are nearly impossible to understand.

Why Smalltalk?

Alive and well today

Values are objects (even true, 3, "hello")

Even classes are objects!

There are no functions---only methods on objects

N.B. BLOCK and LITERAL are special objects.

Slogan: Everything is an object.

Syntax:

mutable variables

message send

sequential composition of mutations and message sends (side effects)

"blocks" (really closures, objects and closures in one, used as continuations)

No if or while. These are implemented by passing continuations to Boolean objects.

(Smalltalk programmers have been indoctrinated and don't even notice)

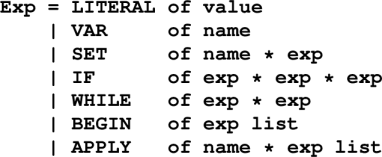

Impcore AST:

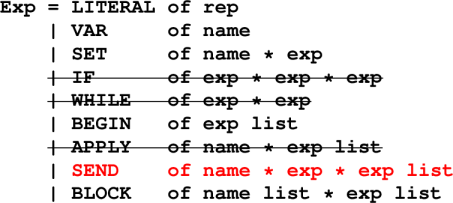

Smalltalk AST:

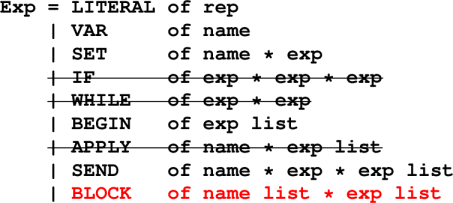

Smalltalk AST:

Environments

Name stands for a mutable cell containing an object:

Dynamic semantics

The initial basis is enormous

Types

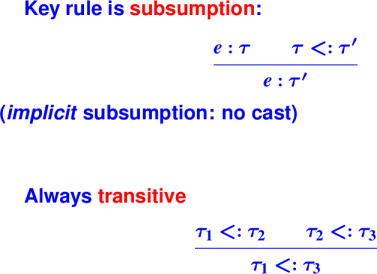

There is no compile-time type system.

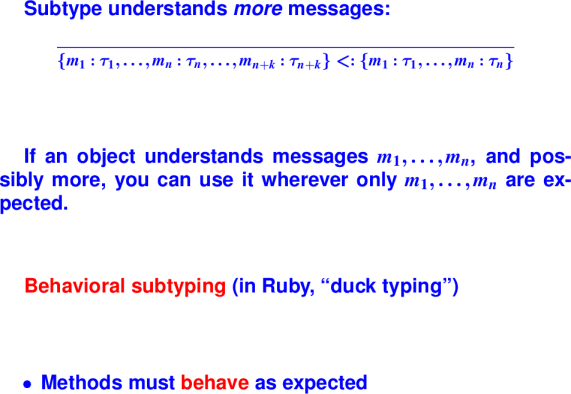

At run time, Smalltalk uses behavioral subtyping, known to Rubyists as "duck typing"

Look at SEND

Optional arguments must match arity of message name

(no other static checking)

*** Example: A bank account ***-> (use finance.smt)

Protocol is Smalltalk term of art:

Ruby dialect "duck typing" is a statement about protocols

Protocol is determined by the class of which an object is an instance

Arities:

Every object gets not just the protocol but the implementations from its class. And a class can inherit from its superclass (more undefined terms) all the way up to class Object.

*** Simple examples ***

-> true

<True>

-> True

<class True>

-> Object

<class Object>

-> (isNil 3)

<False>

-> (isNil nil)

<True>

-> (println nil)

nil

nilBlocks are closures

(block (formals) expressions)

For parameterless blocks (normally continuations), [expressions]

Blocks are objects

You don't "apply" a block; you "send it the value message"

*** Block Examples ***-> (val twice (block (n) (+ n n)))

Booleans use continuation-passing style

Blocks delay evaluation

*** Boolean example: minimum ***-> (val x 10) -> (val y 20) -> (ifTrue:ifFalse: (<= x y) {x} {y}) 10

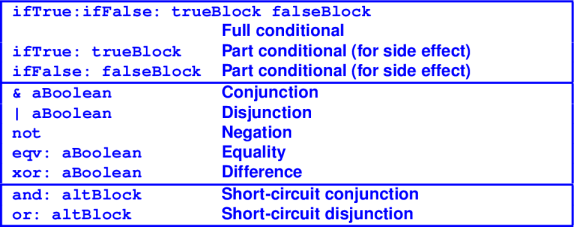

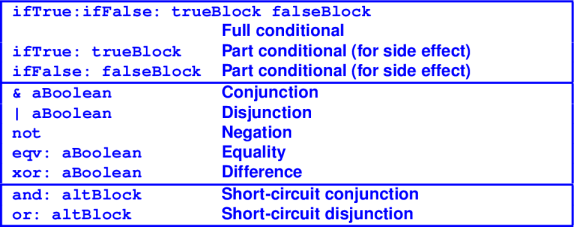

Protocol for Booleans:

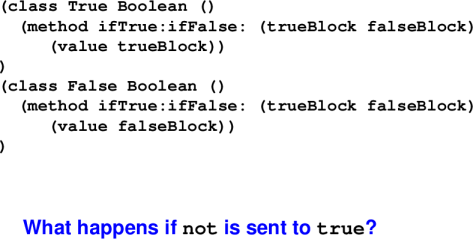

Booleans implemented with two classes True and False

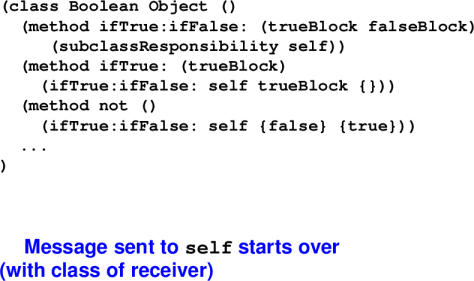

Classes True and False:

Protocol for Booleans:

Board - Method dispatch

To answer a message:

Consider the class of the receiver

Is the method with that name defined?

If so, use it

If not, repeat with the superclass

Run out of superclasses?

"Message not understood"

{ message dispatched to class }:

*** Blocks manage loops ***

-> (val x 10)

-> (val y 20)

-> (whileTrue: {(<= x (* 10 y))}

{(set x (* x 3))})

nil

-> x

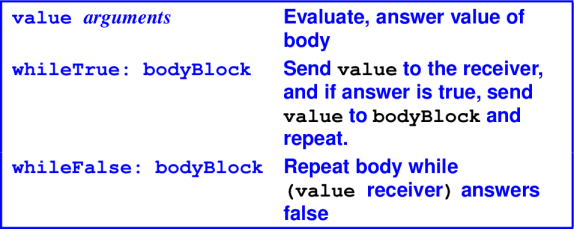

270Protocol for blocks:

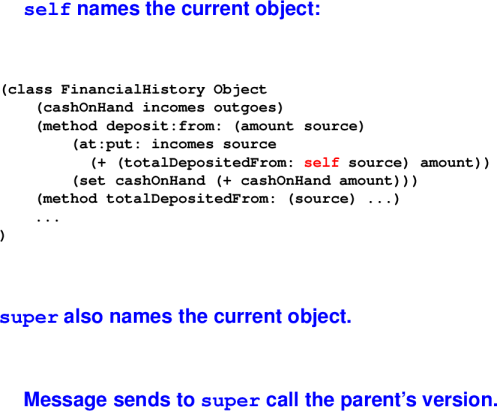

{Special variables self'' andsuper''}:

Object initialization:

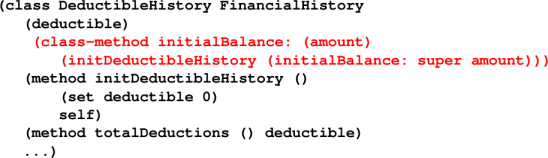

The DeductibleHistory subclass records tax deductions.

Object initialization with a subclass:

Double Dispatch

Typical: Executed code depends on class of the receiver

Problem: What if code should depend upon the class of both the receiver and the argument?

Solution: Method name encodes the operation and the type of argument

Consider algorithms to add an Integer and a Float.

Integer FloatInteger int + mixed +

Float mixed + float +

By sending a message, we can select code according to the class of the receiver, but not the argument. By sending a second message with the class of the first receiver encoded in the name, we can select code according to both.

Double Dispatch Example:

Messages such as

addFloatTo:encode the argument class in the method name.

In class Float:

(method + (arg)

(addFloatTo: arg self))In class Integer:

(method addFloatTo: (aFloat)

(<<code to add an Integer and a Float>>)Consider evaluation of:

(+ 5.5 3)Sends + message to object 5.5 with argument 3

Sends addFloatTo: message to 3 with argument 5.5

Code to add an Integer and a Float is executed.

Goal of objects is reuse

Key to successful reuse is a well-designed class hierarchy

Killer app: toolkits for building user interfaces

Smalltalk blue book is 90 pages on language, 300 pages on library

Lots of abstract classes

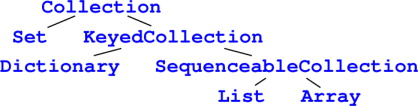

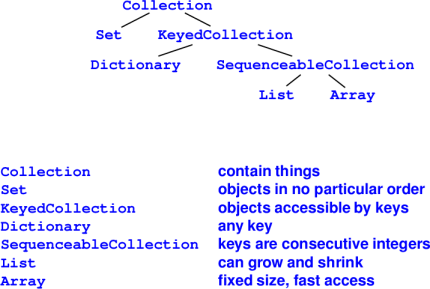

Boolean``Collection hierarchy'':

``Collections hierarchy'':

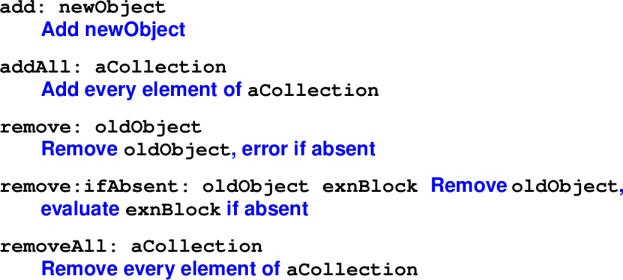

Collection mutators:

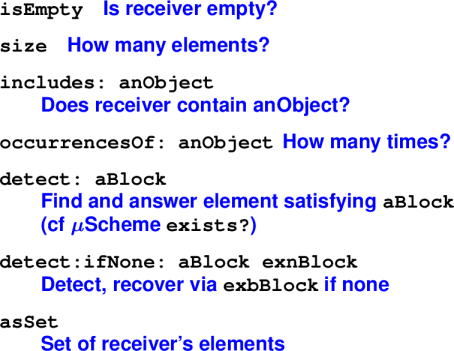

Collection observers:

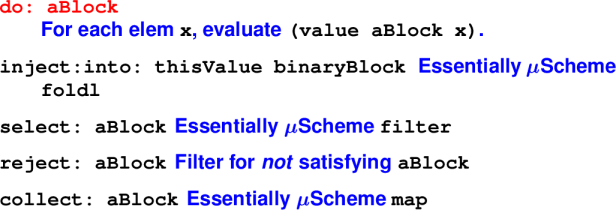

Collection iterators:

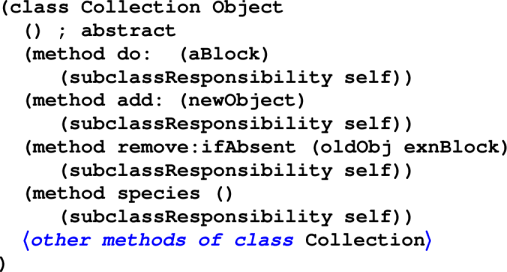

Implementing collections:

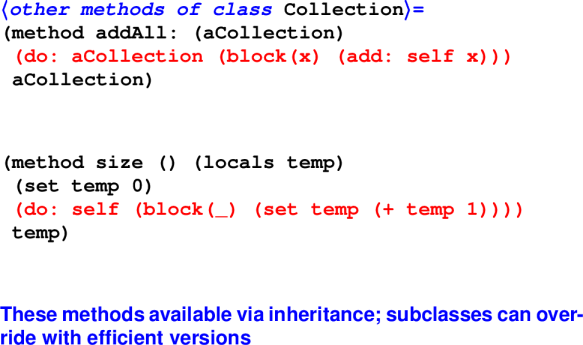

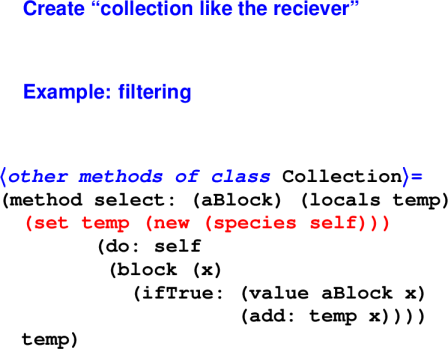

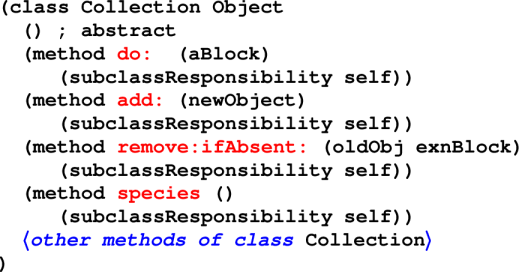

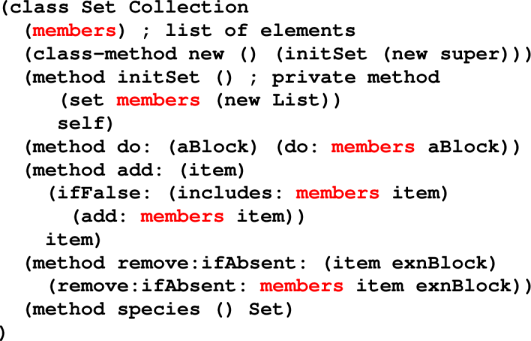

Reusable methods:

Question: what's the most efficient way to find the size of a list?